Friday, July 9, server start


| Author | Message |

|---|---|

zoom3+1=4 Joined: 30 Nov 03 Posts: 65773 Credit: 55,293,173 RAC: 49

|

Any news on the limit this week? Still 20 for now? Monday I think, maybe. The T1 Trust, PRR T1 Class 4-4-4-4 #5550, 1 of America's First HST's

|

RottenMutt Joined: 15 Mar 01 Posts: 1011 Credit: 230,314,058 RAC: 0

|

Monday I think, maybe. Now you're just trying to provoke me. ... limits are: so if you have 28 (28*5=140) or more logical CPUs, it is possible that you could not get any GPU work.

|
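The arithmetic behind that remark can be sketched roughly as follows. This is only an illustration, assuming a limit of 5 CPU tasks per logical core and an overall cap of 140 tasks in progress per host; both figures come from posts in this thread, not from the scheduler code, and the function name is made up for the example.

```python
# Hypothetical helper; the two constants are assumptions taken from the thread.
CPU_TASKS_PER_CORE = 5   # per-core CPU limit quoted in the thread
TOTAL_TASK_CAP = 140     # overall tasks-in-progress cap quoted in the thread


def gpu_room(logical_cpus, per_core=CPU_TASKS_PER_CORE, cap=TOTAL_TASK_CAP):
    """Return how much of the total cap is left once the CPU quota is full."""
    cpu_quota = logical_cpus * per_core
    return max(cap - cpu_quota, 0)


for cores in (4, 8, 28, 32):
    print(cores, "logical CPUs ->", gpu_room(cores), "slots left for GPU work")
```

Under these assumptions a host with 28 or more logical CPUs fills the whole cap with CPU tasks and has nothing left over for the GPU.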

rebest Joined: 16 Apr 00 Posts: 1296 Credit: 45,357,093 RAC: 0

|

First, the good news. All of my uploads went without a hitch. Very cool. Now the bad news. It appears the CPU/GPU differentiation does not work at all for the optimized apps. As predicted, I ran out of GPU work yesterday afternoon. I drew a very lucky hand of Astropulse work on Monday so I still have a number of MB VLAR CPU units left to crunch. Even though my GPU is currently sitting idle, I'm still getting the "Message from server: This computer has reached a limit on tasks in progress". This is, well, annoying. It will be interesting to see when Scheduler sees fit to send me the 40 GPU WU's I'm supposed to have.  Join the PACK! |

perryjay Joined: 20 Aug 02 Posts: 3377 Credit: 20,676,751 RAC: 0

|

so I still have a number of MB VLAR CPU units left to crunch. Uhhh, Rebest, how big a number of VLARs? If you have more than 140 WUs on your computer you won't get any more until you get them down or the Powers That Be up the quota for us.  PROUD MEMBER OF Team Starfire World BOINC |

|

Rabbit&Carrot Joined: 3 Oct 03 Posts: 25 Credit: 80,178,117 RAC: 0

|

Monday I think, maybe. I am afraid that is true. One of my rigs, with a quad-core CPU and 3 GPUs, has for some reason 700 VLARs, and because of the limit on the total number of WUs I cannot receive any GPU WUs. I should either let my GPUs get some rest until Monday or kill 680 VLARs to get some GPU WUs. |

rebest Joined: 16 Apr 00 Posts: 1296 Credit: 45,357,093 RAC: 0

|

so I still have a number of MB VLAR CPU units left to crunch. Ah! That's it! Looks like it's going to be a long weekend crunching those $#%^$#&^% VLARs, or I kill them to get back up to full speed. Too bad these are my only choices.  Join the PACK! |

JohnDK Joined: 28 May 00 Posts: 1222 Credit: 451,243,443 RAC: 1,127

|

I should either let my GPU's get some rest until Monday or kill 680 VLAR's to get some GPU WU's. I'm now able to download *ONE* GPU WU at a time after I've finished the ones I have. They're all VLARs except a few VHARs slipping through. |

Geek@Play Joined: 31 Jul 01 Posts: 2467 Credit: 86,146,931 RAC: 0

|

I went and inspected the first page of my returned work today. It's all VLAR work. Was interested in how many times they have been issued. 20 wu were issued to 69 client computers before finally being validated. That's an average of 3.45 client computers for each wu. To be fair there were a couple that validated with the first 2 issues but there were at least 4 wu that were issued to 4, 5 and even 6 computers before finally reaching validation. I for one will be happy when the killer app is no longer needed. Boinc....Boinc....Boinc....Boinc.... |
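The average quoted above can be checked with a couple of lines. This is just a sketch of the arithmetic using the numbers in the post; the "extra copies" figure additionally assumes every WU starts with 2 copies, which the post only implies.

```python
workunits = 20      # WUs inspected, from the post
hosts_issued = 69   # total client computers those WUs were sent to, from the post

average_issues = hosts_issued / workunits
print(f"average issues per WU: {average_issues:.2f}")

# Assumption: each WU is initially issued to 2 hosts, so anything beyond
# that is a reissue needed before validation.
initial_copies = 2
extra_copies = hosts_issued - initial_copies * workunits
print("copies beyond the initial pair:", extra_copies)
```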

Gary Charpentier Joined: 25 Dec 00 Posts: 30687 Credit: 53,134,872 RAC: 32

|

I went and inspected the first page of my returned work today. It's all VLAR work. Was interested in how many times they have been issued. I was under the impression the outage and the 99% issuance of VLAR's was to force the people using VLAR killers to stop using them or not be able to get any work and they would quit out of frustration. IIRC Frank Drake proved that ET will only be found in a VLAR. ;)

|

|

Tony Li Joined: 21 May 01 Posts: 6 Credit: 1,337,747 RAC: 0

|

Just FYI: Due to personal circumstances, I'm not able to participate on weekends. As a result, I'm effectively now only getting work two days a week. So far, this is going ok, but if I run out of work, I'm going to give my cycles to another project... |

SciManStev Joined: 20 Jun 99 Posts: 6653 Credit: 121,090,076 RAC: 0

|

Just FYI: Due to personal circumstances, I'm not able to participate on weekends. As a result, I'm effectively now only getting work two days a week. No problem! By contributing your cycles, you are helping science in one way or another. Good luck with whatever you choose! :) Steve Warning, addicted to SETI crunching! Crunching as a member of GPU Users Group. GPUUG Website |

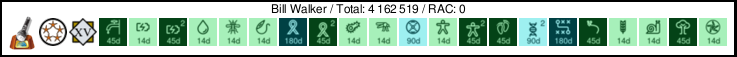

Bill Walker Joined: 4 Sep 99 Posts: 3868 Credit: 2,697,267 RAC: 0

|

Just FYI: Due to personal circumstances, I'm not able to participate on weekends. As a result, I'm effectively now only getting work two days a week. I agree with Steve. BOINC will handle a range of workloads very nicely. I have one old machine, only on part time, that does about 2WU a week for S@H. It is quite happy to just sit there and crunch S@H, and CPDN, at its own pace. Every little bit helps.

|

MadMaC Joined: 4 Apr 01 Posts: 201 Credit: 47,158,217 RAC: 0

|

Just FYI: Due to personal circumstances, I'm not able to participate on weekends. As a result, I'm effectively now only getting work two days a week. I do MW@home in my spare time, but this is the only space-based project that supports Fermi at the moment, as far as I know.

|

kittyman Joined: 9 Jul 00 Posts: 51469 Credit: 1,018,363,574 RAC: 1,004

|

I would like to thank Jeff for his establishing communication with the rabble. Thank you, Jeff. "Freedom is just Chaos, with better lighting." Alan Dean Foster

|

|

Josef W. Segur Joined: 30 Oct 99 Posts: 4504 Credit: 1,414,761 RAC: 0

|

I am starting the servers up. I'm not sure I agree with the logic, an AP task has about 23 times the bits of an S@H Enhanced task, but the estimated runtime may have a higher ratio. For many hosts, one AP WU fulfills the cache setting so they would stop requesting work. But counting an AP and "MB" task the same for the limits mechanism doesn't make sense. Once the server bug which is affecting counting for AP application versions is fixed, then it would make sense to have different limits for AP and "MB" applications. It's probably a moot point for this week anyhow.

For next week it could be implemented fairly simply during the outage by having only mb_splitters active until S@H Enhanced "Results ready to send" grew to about 3/4 whatever target they had set, then enabling ap_splitters. The taskid sequence then would have a stretch of maybe 300000 with no AP tasks. Preceding that would be a mixed section left over from the Tuesday shutdown so some early requests would get AP, but when that 300000 sequence was reached only "MB" tasks would be going out for about 3 hours at peak rate.

I believe the sequence may work out just as unevenly as last week, but the higher limits will mean those sections of the queue where there's very little AP work may stay at peak delivery rather than falling back to 50 Mb/s.

From Scarecrow's graphs it appears AP "Results ready to send" has actually increased since the restart, while "MB" has decreased. That suggests the actual distribution was similar to what I suggested in the preceding paragraph.

A reduction from 3 Feeder slots for AP v505 to only 2 might be a good change. 48:1 is a better approximation to the 40:1 splitting ratio than the current 32:1. It would make the AP splitters fall behind the mb_splitters when both are working on the same set of "tapes" rather than getting ahead as they do now. But the real advantage is that 96 or 97 "MB" WUs plus 2 AP WUs is about 427 Mbits and if the Feeder is running a 5 second cycle the download bandwidth would average 85.4 Mbits/second. If an even enough mix in the "Results ready to send" queue were possible so the Feeder were able to replenish all slots each cycle, that would be close to ideal. Joe |
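The Feeder numbers in that post can be reproduced with a small sketch. It only restates the arithmetic from the post (96 "MB" slots, 2 or 3 AP slots, about 427 Mbits per cycle, a 5 second cycle); the function names are invented for illustration and nothing here reflects the actual Feeder code.

```python
MB_SLOTS = 96                 # "MB" slots per Feeder cycle, from the post
MBITS_PER_CYCLE = 427         # approximate payload of one full cycle, from the post
FEEDER_CYCLE_SECONDS = 5      # Feeder cycle length the post assumes


def mb_to_ap_ratio(ap_slots, mb_slots=MB_SLOTS):
    """Ratio of "MB" slots to AP slots for a given number of AP slots."""
    return mb_slots / ap_slots


def average_mbits_per_second(mbits_per_cycle=MBITS_PER_CYCLE,
                             cycle_seconds=FEEDER_CYCLE_SECONDS):
    """Average download rate if every slot is refilled each cycle."""
    return mbits_per_cycle / cycle_seconds


print("3 AP slots ->", mb_to_ap_ratio(3), ": 1")
print("2 AP slots ->", mb_to_ap_ratio(2), ": 1")
print("average rate:", average_mbits_per_second(), "Mbits/second")
```

With 3 AP slots the ratio comes out at 32:1 and with 2 slots at 48:1, matching the figures in the post, and 427 Mbits every 5 seconds averages 85.4 Mbits/second.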

|

Cosmic_Ocean Joined: 23 Dec 00 Posts: 3027 Credit: 13,516,867 RAC: 13

|

I observe that the limit has been lifted (or increased) shortly after 6pm PDT. Linux laptop: record uptime: 1511d 20h 19m (ended due to the power brick giving-up) |

arkayn Joined: 14 May 99 Posts: 4438 Credit: 55,006,323 RAC: 0

|

|

zoom3+1=4 Joined: 30 Nov 03 Posts: 65773 Credit: 55,293,173 RAC: 49

|

I observe that the limit has been lifted (or increased) shortly after 6pm PDT. Oh wow(rolls eyes), Just for My cpu, I'd need 19.2 WU's per logical CPU core, As My cpu crunches the WU's in 1:15:00, plus or minus a couple minutes or so. The T1 Trust, PRR T1 Class 4-4-4-4 #5550, 1 of America's First HST's

|
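The 19.2 figure in that post appears to be a per-day number. A quick sketch, assuming a one-day window (the post does not state the window explicitly) and the 1:15:00 runtime it quotes:

```python
from datetime import timedelta

runtime_per_wu = timedelta(hours=1, minutes=15)  # 1:15:00 per WU, from the post
cache_window = timedelta(days=1)                 # assumption: the figure is per core per day

# Dividing one timedelta by another gives a plain float.
wus_per_core = cache_window / runtime_per_wu
print(f"{wus_per_core:.1f} WUs per logical core per day")
```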

|

Cosmic_Ocean Joined: 23 Dec 00 Posts: 3027 Credit: 13,516,867 RAC: 13

|

I was at the previous limit 5 per, 4 cores, and I managed to pick up 12 more, so I think it's more like 8 per CPU. Linux laptop: record uptime: 1511d 20h 19m (ended due to the power brick giving-up) |
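That estimate works out as follows. This is just the arithmetic from the post, assuming the host was sitting exactly at the old limit before the extra tasks arrived:

```python
cores = 4                # CPU cores on the host, from the post
old_limit_per_core = 5   # previous per-core limit, from the post
extra_tasks = 12         # additional tasks picked up after the change

tasks_before = cores * old_limit_per_core
tasks_after = tasks_before + extra_tasks
new_limit_per_core = tasks_after / cores

print(f"{tasks_before} tasks before, {tasks_after} after, "
      f"about {new_limit_per_core:g} per core")
```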

JohnDK Joined: 28 May 00 Posts: 1222 Credit: 451,243,443 RAC: 1,127

|

And the DL servers are really struggling now, it took 22+ mins downloading a WU. One more now trying to DL, same thing + project backoff. |

©2024 University of California

SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.