Hate scheduler (yes or no)


| Author | Message |

|---|---|

ksnash Joined: 28 Nov 99 Posts: 402 Credit: 528,725 RAC: 0

|

Yes I hate it. Doesn't do anything useful and causes more problems. |

Keck_Komputers Joined: 4 Jul 99 Posts: 1575 Credit: 4,152,111 RAC: 1

|

I lean towards liking it. It still has problems but it works better than any version since the 3.xx versions. BOINC WIKI   BOINCing since 2002/12/8 |

Daykay Joined: 18 Dec 00 Posts: 647 Credit: 739,559 RAC: 0

|

I don't hate it. I just don't think it is quite ready to be declared a recommended version. Kolch - Crunching for the BOINC@Australia team since July 2004. Search for your own intelligence... |

The Gas Giant Joined: 22 Nov 01 Posts: 1904 Credit: 2,646,654 RAC: 0

|

TheGasGiant hates the new wu scheduler. It's been nothing but trouble for me since I installed the first dev version. If I have a cache of 4 days set and all the projects I'm attached to are running, then I expect BOINC to hold at least 3 days of wu's cached. Live long and crunch! Paul. |

|

Astro Joined: 16 Apr 02 Posts: 8026 Credit: 600,015 RAC: 0 |

I don't think it's been long enough for some people to know if they "Hate" it or not. Those with always-on connections and higher-speed computers should have an idea about how it works. Those with slow puters, intermittent connections, or higher "Connect to" settings haven't had enough time to fairly see if the resource share is being honored. I'm one of the "Original" people who didn't think this is ready for "Primetime", and I still don't. I think a Poll would not be as accurate/fair if held at this time. I think you should wait for the weekend to have a poll. tony |

RDC Joined: 17 May 99 Posts: 544 Credit: 1,215,728 RAC: 0

|

"I'm one of the "Original" people who didn't think this is ready for "Primetime", and I still don't. I think a Poll would not be as accurate/fair if held at this time. I think you should wait for the weekend to have a poll." I agree, Tony. The 4.43 scheduler is finally starting to settle in on my system but still has a bit more to go. I'm now getting work more often and it's switching projects more often, but until the scheduler gets settled in, I'm going to withdraw my previous complaint(s) and take a wait-and-see attitude until after a week of running. To express an "I like/love it" or "I dislike/hate it" opinion at this time would not be a true representation of the performance on my system, as it seems like it will take about a week to get it settled into this computer. |

Thierry Van Driessche Joined: 20 Aug 02 Posts: 3083 Credit: 150,096 RAC: 0

|

Running S@H together with E@H with 4.43. Result:

2005-05-25 01:48:09 [---] schedule_cpus: time 3600.078125
2005-05-25 01:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 01:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 02:48:09 [---] schedule_cpus: time 3600.015625
2005-05-25 02:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 02:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 03:48:09 [---] schedule_cpus: time 3600.062500
2005-05-25 03:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 03:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 04:48:09 [---] schedule_cpus: time 3600.078125
2005-05-25 04:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 04:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 05:48:09 [---] schedule_cpus: time 3600.000000
2005-05-25 05:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 05:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 06:48:09 [---] schedule_cpus: time 3600.015625
2005-05-25 06:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 06:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 07:48:09 [---] schedule_cpus: time 3600.046875
2005-05-25 07:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 07:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0
2005-05-25 08:48:09 [---] schedule_cpus: time 3600.014936
2005-05-25 08:48:09 [---] earliest deadline: 1117568031.000000 H1_0965.5__0965.9_0.1_T08_Fin1_1
2005-05-25 08:48:09 [---] earliest deadline: 1117568033.000000 H1_0965.5__0965.5_0.1_T09_Fin1_0

Conclusion? The S@H WU's will only be crunched once their deadline is close to that of the E@H WU's. What does it mean? Even with an equal resource share, BOINC will crunch E@H WU's and forget S@H WU's for a while. Finally, crunching of S@H WU's will start 7 days before their deadline (deadlines: Einstein = 7 days, Seti = 14 days). Result? A loss of 7 days of crunching for S@H. If I'm wrong, please correct me. Meanwhile, I uninstalled 4.43 and went back to 4.30. |
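The deadline values in the log above look like Unix timestamps (seconds since 1970-01-01 UTC). As a minimal sketch, assuming that reading is correct, the snippet below converts them to readable dates and orders the results soonest-deadline-first. The helper names `to_utc` and `earliest_deadline_first` are made up for this illustration and are not part of the BOINC client.

```python
from datetime import datetime, timezone

# Deadlines copied from the log above, assumed to be Unix timestamps.
DEADLINES = {
    "H1_0965.5__0965.9_0.1_T08_Fin1_1": 1117568031,
    "H1_0965.5__0965.5_0.1_T09_Fin1_0": 1117568033,
}


def to_utc(epoch_seconds):
    """Convert a Unix timestamp to a timezone-aware UTC datetime."""
    return datetime.fromtimestamp(epoch_seconds, tz=timezone.utc)


def earliest_deadline_first(deadlines):
    """Return result names sorted by deadline, soonest first (hypothetical helper)."""
    return sorted(deadlines, key=deadlines.get)


if __name__ == "__main__":
    for name in earliest_deadline_first(DEADLINES):
        print(name, to_utc(DEADLINES[name]).isoformat())
```

Under that assumption both deadlines fall on 2005-05-31 (UTC), a little under a week after the log entries, which fits the 7-day Einstein deadline mentioned in the post.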

ThePhantom86 Joined: 30 Jan 02 Posts: 268 Credit: 1,970,082 RAC: 0

|

I'm not fond of it, that's for sure. Yesterday my main cruncher didn't have work for over 8 hours and it's attached to Seti, Einstein, Predictor, LHC, Pirates, and BURP. I finally got some wu's from Seti and Einstein. I'll let it run a few more days and if I still don't like it, back to 4.25 I go.

|

MikeSW17 Joined: 3 Apr 99 Posts: 1603 Credit: 2,700,523 RAC: 0

|

Hate it: No. Love it: No. It could be called perfect... ...if you only run one project

|

|

1mp0£173 Joined: 3 Apr 99 Posts: 8423 Credit: 356,897 RAC: 0

|

After much experimentation, running multiple projects, running short deadlines, running long deadlines, and not making any assumptions until I've watched it run for days, it is clearly a big improvement. On the other hand, if you are looking at a "snapshot" (like what's in the queue at a given instant) or watching it for a day when you have "connect every '3' days" in your settings, you'll hate it. I like it a lot. |

KWSN - MajorKong Joined: 5 Jan 00 Posts: 2892 Credit: 1,499,890 RAC: 0

|

When I first saw it work, I was not too fond of it. But, after I understood what it was doing and it had time to settle out, I find that I kinda like it. It is, in my opinion, a big improvement over the old one. https://youtu.be/iY57ErBkFFE #Texit Don't blame me, I voted for Johnson(L) in 2016. Truth is dangerous... especially when it challenges those in power. |

|

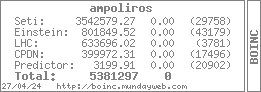

ampoliros Joined: 24 Sep 99 Posts: 152 Credit: 3,542,579 RAC: 5

|

"After much experimentation, running multiple projects, running short deadlines, running long deadlines and not making any assumptions until I've watched it run for days it is clearly a big improvement." I'd have to agree with Ned here. I've been running the alpha/beta versions of the CC on the majority of my machines. In single project scenarios, they work perfectly... no problems that I've seen (Windows or Linux, dial-up or always on). In multiple project scenarios, they will choke and wheeze after an upgrade as the scheduler tries to cope with either the new rules and/or old debts. But it always straightens things out in a week or less (about half the longest deadline in my experience). I think a lot of problems come from the fact that there are those who constantly mess with "connect to" settings, change clients, suspend/reconnect/reset and expect the BOINC CC to instantly react and smooth things out. (I'm not thinking of anyone specific here, don't be offended.) The BOINC CC was designed to work over long periods (just like the projects it manages). That's not to say there aren't legitimate problems. There have been those as well. Who among us has an extra CPDN unit to their name? :) Cache size management has been an ongoing struggle on both sides. Should cache size refer to each project? (3 days SETI, 3 days LHC, and 3 days Einstein; 9 days total) Or should it refer to totals as it does now? (3 days for SETI, LHC and Einstein total). What happens if I have a CPDN unit? Will I keep zero cache of all others because it takes more than 10 days to complete? Then there have been those that set general preferences for every project, not realizing that there is only one set, that every change overwrites the last, and that "resource share" is what they want. Then there's resource share, which is confusing because it doesn't use percentages as you might first assume; it can't because of the cross-project nature. 
The source of all this may be that there is no official documentation on any of this. Outside Paul's documentation there is nothing. And unless you visit the forums on a regular basis, you'd never know about Paul's Docs. All BOINC says is "download and run, attach to project." This may be partially compounded by the fact that in the past month there has been at least one new BOINC CC every week. I really don't think that the developers are allowing the new CC(s) to go through a real testing phase. From my perspective they assume that most of the problems are the result of their program and implement fixes that may not be necessary. I very well might be wrong here, but I don't think a new alpha/beta should be released more often than once per month. And it doesn't help that most of us don't know how to be "real" beta-testers. Ideally, a beta-tester should know the full "ins-and-outs" of the system they are testing on and be able to suggest real fixes to any problems they see. (See recent post on soft page faults.) Now I know that isn't really possible, but it would be nice. ;) At the very least every reported problem should be accompanied by a full description of what was involved. Too often I have seen reports that just say "This isn't working." There, I'm done. I've gotten it all off my chest. Oh, and don't bother answering the questions; they are either rhetorical or used as an example. :)  7,049 S@H Classic Credits |
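The two cache interpretations and the point about resource share not being a percentage can be made concrete with a little arithmetic. The sketch below is only an illustration of the reasoning in the post above: it treats resource share as a relative weight (each project's weight divided by the sum of all weights), and it assumes, purely for the sake of the example, that a single overall cache would be divided in the same proportions. The function names are hypothetical and do not come from the BOINC source.

```python
def share_fractions(resource_shares):
    """Turn relative resource-share weights into fractions that sum to 1."""
    total = sum(resource_shares.values())
    return {project: weight / total for project, weight in resource_shares.items()}


def split_total_cache(cache_days, resource_shares):
    """Interpretation A: one overall cache, divided by share (an assumption)."""
    fractions = share_fractions(resource_shares)
    return {project: cache_days * f for project, f in fractions.items()}


def per_project_cache(cache_days, resource_shares):
    """Interpretation B: every project gets the full cache setting."""
    return {project: cache_days for project in resource_shares}


if __name__ == "__main__":
    shares = {"SETI": 100, "LHC": 100, "Einstein": 100}
    print(split_total_cache(3, shares))                 # about 1 day per project
    print(sum(per_project_cache(3, shares).values()))   # 9 days in total
    # Weights are relative: 80/20 and 400/100 describe the same split.
    print(share_fractions({"SETI": 80, "CPDN": 20}))
    print(share_fractions({"SETI": 400, "CPDN": 100}))
```

Because only the ratio between weights matters, attaching to an extra project changes every project's fraction without anyone editing a number, which is one reason a fixed percentage field would not work across projects.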

|

Ledo Joined: 4 Jul 99 Posts: 55 Credit: 918,136 RAC: 0

|

Personally, I don't like the new scheduler. I don't have enough patience to give it a chance to show its important improvements! The old scheduler works fine for me and I don't need better, because I have an appropriate cache for my PC and don't miss deadlines (unless there is a problem). I have downgraded to 4.25 for two reasons: 1. Seti wasn't getting new units. I know now that, because of the changes made to the scheduler, it needs more than a day to settle down and work like the old one (keeping enough units in cache to meet the value I set). 2. After the upgrade the scheduler downloaded a new CPDN unit. That seemed logical to me: if one takes 50% of the CPU cycles and the rest goes to Seti, why not run two at the same time instead? I thought that was an improvement, but I know now that it is a bug! But the thing that made up my mind to downgrade was that after I restarted my PC and started the client, it downloaded another new CPDN unit (1 unit being crunched and 2 ready to crunch!). That was the last straw and I downgraded, because I don't want to be a collector of CPDN units! (I have 80% Seti and 20% CPDN.) Although the new changes seem of little use to me at this stage and 4.25 is sufficient for me, I would like to CONGRATULATE the DEVS for their efforts to make the client as suitable as possible for the majority of users. Keep up the excellent but hard work! |

|

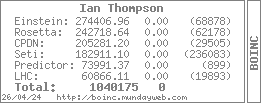

Ian Thompson Joined: 3 Jan 04 Posts: 35 Credit: 182,911 RAC: 0

|

Hey, who said it was a scheduler? It's a dictator, isn't it?

|

|

hallen Joined: 12 Jan 02 Posts: 13 Credit: 226,506 RAC: 0

|

As long as the scheduler ignores a client that is sitting idle, lacking work, the scheduler is a failure. You can tell the scheduler is developed by academics. All the convoluted elegance ignores the simple fact that a computer resource attached to the DISTRIBUTED SYSTEM is not being utilized. That is failure. The ultimate goal of a DISTRIBUTED SYSTEM is to keep all resources attached to it operating at maximum efficiency. I doubt this current crew has the ability to achieve this. I hate waste, but for them, 95%, 96% is good enough. They weren't very good at SETI Classic either. Without 3rd party programs like SetiDriver and SetiQueue, SETI would have been a flop. |

Paul D. Buck Joined: 19 Jul 00 Posts: 3898 Credit: 1,158,042 RAC: 0

|

There *IS* an "official" site but, in my opinion, it is not as good as it could be. This is the developer site at: http://boinc.berkeley.edu/ and it talks to the developers. Dr. Anderson and I have had some go-rounds on this site and its lacks (again in *MY* opinion) and the fact that my site does suffer from being "outside" the development process. Don't get me wrong, Dr. Anderson and I have been trying to get on the same page about documentation and we may be closer to a possible solution. I am working on that right now and I hope I can make it work for all of us. |

|

Heffed Joined: 19 Mar 02 Posts: 1856 Credit: 40,736 RAC: 0

|

I'm still waiting to give a final answer because I'm hoping some tweaks will be made. Such as allowing users to top off their cache. (within reason of course) Currently it sucks for modem users. If fixes aren't made, then yes, I hate it and will stop using BOINC.

|

|

Samantha Carter Joined: 23 May 99 Posts: 4 Credit: 13,221 RAC: 0

|

"I think a lot of problems come from the fact that there are those who constantly mess with "connect to" settings, change clients, suspend/reconnect/reset and expect the BOINC CC to instantly react and smooth things out. (I'm not thinking of anyone specific here, don't be offended.) The BOINC CC was designed to work over long periods (just like the projects it manages)." I'm not offended, but I do want to explain why I end up tinkering so much. Firstly, BOINC seems to make the assumption that BOINC projects are the only projects that I'll want to run. This is not true. I have several other projects that I run, and I have a rotating, specific schedule of when I run them. BOINC normally runs for four days (because I currently have four projects attached), then I run through my other projects (which run a day apiece), and then I cycle back to BOINC and repeat the process. So yes, every few days I do end up suspending, moving on to something else, and coming back to BOINC. I did let the new version run for several days, and as I stated in a previous thread, it only processed my one ClimatePrediction workunit. Nothing else would download until late yesterday, when it decided to download two new, massive, ClimatePrediction workunits. All other projects were attached but simply refused to download any units, even when I tried suspending all other projects and only letting a particular one have the machine. Up until recently, I was also running a MMORPG that had lag issues unless I completely shut down BOINC. BOINC was the only project that seemed to really have an issue with it, so when I was playing that game for six, seven, eight hours a day I had no choice but to suspend BOINC because it refused to use anything less than 50% of my processors at all times even on the lowest priority settings (which is the default). Why can't we simply say, "Give me a workunit from x project" and "Process x workunit for x hours"? Is this too difficult? 
This reminds me of a script one of my friends wrote for a server I run that takes into account how much bandwidth is being used over time and initiates a new send if the server is not using the minimum amount I specified. Unfortunately, he coded it in such an over-complicated way that it would take hours sometimes for the script to realize I wasn't utilizing my bandwidth fully and I would be 20-30kb/s under my minimum requirements for those hours. I finally had to edit the script myself and change one of the multipliers to make it function in the way I intended. Modifying that made my output practically double each day. So, with that, I say to the development team: What good are idle processors? If people continue to find their processors idle, they will simply move on to other projects. I understand not giving a client a workunit if there are no workunits available, but there were definitely workunits available for the projects I couldn't get any from, the scheduler just refused to give them to me. And that, I believe, is truly a failure. |
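The bandwidth-script anecdote above describes a monitor that averages usage over time and reacts only when the average drops below a minimum. The post does not say how that script computed its average or which multiplier was changed, so the following is a purely hypothetical sketch: it uses an exponential moving average, with a made-up `smoothing` factor standing in for the multiplier, to show how a heavily smoothed average can take a long time to notice a shortfall. All names and numbers are invented for illustration.

```python
def samples_until_detected(smoothing, start_rate, actual_rate, minimum_rate,
                           max_samples=100_000):
    """Count samples until a smoothed average of the rate falls below the minimum.

    smoothing    -- weight given to each new sample (0 < smoothing <= 1)
    start_rate   -- the average before the slowdown began
    actual_rate  -- the real (lower) rate after the slowdown
    minimum_rate -- the threshold that should trigger a new send
    Returns None if the average never drops below the minimum.
    """
    average = start_rate
    for sample in range(1, max_samples + 1):
        # Exponential moving average: blend the new sample into the old value.
        average = smoothing * actual_rate + (1 - smoothing) * average
        if average < minimum_rate:
            return sample
    return None


if __name__ == "__main__":
    # Hypothetical numbers: average was 120, real rate dropped to 75, minimum is 100.
    print("responsive:", samples_until_detected(0.5, 120, 75, 100))
    print("sluggish:  ", samples_until_detected(0.001, 120, 75, 100))
```

With a small smoothing factor the monitor needs hundreds of samples before it reacts, which is the kind of delay the post complains about; raising the factor trades stability for responsiveness.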

|

Pascal, K G Joined: 3 Apr 99 Posts: 2343 Credit: 150,491 RAC: 0

|

I like 4.43; basically you just need to give it time and it seems to work out fine. Too bad, or it may be a good thing, that the projects are having problems now, 'cause I feel it is showing some of the weaknesses of the CC, but this allows John and the other Devs to get a better handle on the bugs. You've got to remember, this newfangled program is still a baby and it is learning to crawl, and soon it will be walking, and then it will be running amok and all will be well with the universe lolololol Semper Eadem So long Paul, it has been a hell of a ride. Park your ego's, fire up the computers, Science YES, Credits No. |

Paul D. Buck Joined: 19 Jul 00 Posts: 3898 Credit: 1,158,042 RAC: 0

|

Samantha, and that is why the developers are working so hard on these issues. For the most part we are seeing a solid amount of progress each week. I know most people don't have the time to spend "bird-dogging" this stuff and so, I do. I watch the various mailing lists and also try to get good bug reports into the bugbase. In an average day I will see from 10-20 changes to the CVS (one of the mailing lists) and there have been quite a few fixes to the Work Scheduler which should be compiled into the 4.44 version. The back end is a little more problematic, and one of the issues you allude to in your post was caused by some hand-shaking problems between the clients and the servers. All of this effort is to work on problems that in some cases have been with us for a year or more, but there was not time to make the changes as there were not enough developers available. As for the new Work Scheduler, we would not be seeing it if John McLeod VII had not done the work. |
