Going from GTX 470 to 500/600 series what model is best?


| Author | Message |

|---|---|

|

Speedy Joined: 26 Jun 04 Posts: 1643 Credit: 12,921,799 RAC: 89

|

OK thanks for details. I guess the question should be asked: What card gives the most RAC for the least power output? Rig runs round 12 hours a day With a Core 2 Duo over clocked to 4.2GHz That is an impressive overclock you have. Is the Core 2 Duo being cooled by air, and what is the temperature of the room that your computer is in?

|

|

tbret Joined: 28 May 99 Posts: 3380 Credit: 296,162,071 RAC: 40

|

I don't know where you got the idea that a GTX 660 is anywhere close to the speed of your GTX 570, but that's not even close to being right.

THE FOLLOWING NUMBERS ARE FLAWED, BUT THEY AREN'T WRONG. Not every card is "competing" under the same conditions. I just did a fast, down-and-dirty, run of Fred's Optimization Tool at 3 work units per card at once. I couldn't get it to run on my triple 560 machine, a problem I'll look into when I have time.

Below:
Device 0 = NVIDIA reference GTX 660Ti
Device 1 = NVIDIA reference GTX 660Ti
Device 2 = 02G-P4-2670-KR EVGA GeForce GTX 670

Starting test: (x41g)
16 December 2012 - 03:26:36 Start, devices: 3, device count: 3 (0.33)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 3, average time / count: 475, average time on device: 158 Seconds (2 Minutes, 38 Seconds)
Device: 1, device count: 3, average time / count: 477, average time on device: 159 Seconds (2 Minutes, 39 Seconds)
Device: 2, device count: 3, average time / count: 401, average time on device: 133 Seconds (2 Minutes, 13 Seconds)

In the above example the 670 is a reference clocked card in a x4 PCIe slot, probably held-back a little by that.

Below:
Device 0 = EVGA 012-P3-2078-KR EVGA GeForce GTX 560 Ti 448 Cores Classified Ultra

Starting test: (x41g)
16 December 2012 - 03:29:46 Start, devices: 1, device count: 3 (0.33)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 3, average time / count: 353, average time on device: 117 Seconds (1 Minutes, 57 Seconds)

Your GTX 570 is faster than my 560Ti-448. My 560Ti-448 is more than 35% faster than my GTX 660Ti which in turn will be faster than a GTX 660.
Just for additional comparison:

Below:
Device 0 & 1 NVIDIA reference 560Ti

Starting test: (x41g)
16 December 2012 - 04:47:33 Start, devices: 2, device count: 3 (0.33)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 3, average time / count: 403, average time on device: 134 Seconds (2 Minutes, 14 Seconds)
Device: 1, device count: 3, average time / count: 407, average time on device: 135 Seconds (2 Minutes, 15 Seconds)

My reference 560Tis are faster than my reference 660Ti by almost 18%.

The 600-Series cards are closest to crunching SETI like their "one step down" model in the 500-series.
The GTX 660Ti is "close" to a GTX 560 (no Ti)
The GTX 670 is "close" to a GTX 560Ti (although the 670 does seem faster than these numbers are showing)

One more thing as a "sanity check":

Below:
Devices 0 & 1 EVGA 012-P3-1470-RX EVGA GeForce GTX 470

Starting test: (x41g)
16 December 2012 - 03:31:36 Start, devices: 2, device count: 3 (0.33)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 3, average time / count: 442, average time on device: 147 Seconds (2 Minutes, 27 Seconds)
Device: 1, device count: 3, average time / count: 448, average time on device: 149 Seconds (2 Minutes, 29 Seconds)

A GTX 470 is FASTER than a GTX 660Ti which is faster than a GTX 660.

THIS is an example of a comparison that will tighten-up, if not flip-flop, due to the updated x41z application. Maybe. But under no conditions (that are possible today) will a GTX 660Ti meaningfully beat (if equal) a GTX 470's output.

I'm going to be doing some testing of all of these cards under a single set of test conditions in the very near future. I ordered the motherboard and RAM Saturday. You won't get exactly the same times I will, but all of the cards will be operating with the same limitations (like a PCIe 2.0 bus, I went AMD again).
I think everyone is over-estimating the crunching power of the 600-series based on game benchmarks which do not translate well to crunching.

YES, the 600-series show more increase in performance using the x41z applications than the 500-series (and 400-series) do. The improvement does not leapfrog the 600-series ahead of the 500-series, however, and certainly not for the price.

A 660Ti, even a SC model, is no match for a 560Ti, and is barely a match for a GTX 560 (no Ti)...

...with the understanding that my reference is "AT CRUNCHING FOR SETI WITH THE APPLICATIONS WE HAVE AT THIS TIME."

For all I know, a GTX 660 might be "just the ticket" at some other project, or it might even be a better choice for SETI this time next year. Who knows? I certainly don't. But these gaming benchmarks are really not translating well to crunching-power.
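As a rough check on the percentages quoted in this post, here is a minimal Python sketch. It assumes that "X% faster" means the ratio of average seconds per work unit, which is only one reasonable reading of the numbers. The names `percent_faster` and `seconds_per_unit` are illustrative, and the times are the "average time on device" values reported for device 0 of each run.

```python
def percent_faster(slow_seconds: float, fast_seconds: float) -> float:
    """Throughput advantage of the quicker card over the slower one, in percent."""
    return (slow_seconds / fast_seconds - 1.0) * 100.0


# Average seconds per work unit at 3 units per card (device 0 of each run).
seconds_per_unit = {
    "GTX 660Ti (reference)": 158,
    "GTX 670 (reference, x4 slot)": 133,
    "GTX 560Ti-448": 117,
    "GTX 560Ti (reference)": 134,
    "GTX 470": 147,
}

baseline = seconds_per_unit["GTX 660Ti (reference)"]

# List the cards from fastest to slowest, relative to the reference 660Ti.
for card, secs in sorted(seconds_per_unit.items(), key=lambda kv: kv[1]):
    gain = percent_faster(baseline, secs)
    print(f"{card:30s} {secs:4d} s  {gain:6.1f}% vs 660Ti")
```

Under that reading, the 560Ti-448 comes out a little over 35% ahead of the 660Ti and the reference 560Ti a little under 18% ahead, which agrees with the figures given above.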

|

Cliff Harding Joined: 18 Aug 99 Posts: 1432 Credit: 110,967,840 RAC: 67

|

The MSI N680GTX Lightning GeForce GTX 680 2GB might be a bad idea even in a (full tower) HAF-932 case. From what I've seen the card has 2 fans, which means that the card does not have an external exhaust. The heat stays inside the case until it reaches the top exhaust fan. Unless you are liquid cooling the CPU, that heat will come in contact with the fins of the CPU air cooler. The 240mm side panel fan on the HAF-932 works extremely well for cooling graphics cards. Both of my machines are in the HAF-932; both have dual external-exhaust cards with temps below 65C. The HAF-932 comes with 2 x 240mm incoming fans (front & side) and 1 x 240mm at the top for exhaust. I think that changing the air flow direction (to exhaust) of the side panel fan will disrupt the front fan airflow, resulting in higher internal case temps. I don't buy computers, I build them!! |

|

Team kizb Joined: 8 Mar 01 Posts: 219 Credit: 3,709,162 RAC: 0

|

Great information tbret, thanks for the data on the GTX 470. Looks like, based on your data, running my 3 470's @ 0.33 would still put up some strong numbers from a RAC/cost standpoint compared to selling them and buying 600 series cards. My Computers: █ Blue Offline █ Green Offline █ Red Offline |

W-K 666 Joined: 18 May 99 Posts: 19064 Credit: 40,757,560 RAC: 67

|

Great information tbret, thanks for the data on the GTX 470. Looks like, based on your data, running my 3 470's @ 0.33 would still put up some strong numbers from a RAC/cost standpoint compared to selling them and buying 600 series cards.

But you need to factor in the power consumption: the GTX 470 uses about 100W more than the GTX 670 at full load. No idea what your energy costs per kWh are, but at 20 cents (US) per kWh that would be approaching $200/year/card running 24/7. |
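The arithmetic behind that estimate can be sketched in a few lines of Python. This is only an illustration: the function name `annual_cost` and its parameters are made up for the example, and the 100 W and $0.20/kWh inputs are the figures from this post, not measurements.

```python
def annual_cost(extra_watts: float, rate_per_kwh: float,
                hours_per_day: float = 24.0) -> float:
    """Yearly electricity cost of an extra power draw, in the currency of the rate."""
    kwh_per_year = extra_watts / 1000.0 * hours_per_day * 365
    return kwh_per_year * rate_per_kwh


# 100 W extra per card, $0.20 per kWh, running around the clock.
print(f"24/7:       ${annual_cost(100, 0.20):.2f} per card per year")

# Same card on a rig that only runs about 12 hours a day.
print(f"12 h a day: ${annual_cost(100, 0.20, hours_per_day=12):.2f} per card per year")
```

At 24/7 this works out to roughly $175 per card per year, so "approaching $200" is in the right range; a rig that runs half the day pays about half that.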

|

tbret Joined: 28 May 99 Posts: 3380 Credit: 296,162,071 RAC: 40

|

There are all kinds of things you need to factor in when you are choosing a card or cards.

For instance, I don't play games. These are strictly crunchers. If I were worried about playing games, I would probably not have bought the 470s.

In my case I bought the 470s as refurb units from EVGA and paid $119 for them. The 660Tis were (at the time) $350 each. I can run the 470s 24/7 for a long time before I chew up the $460 difference in cost. But if I had needed a new $200 power supply to run the 470s, and could have run the 660Tis with the one I already owned, I probably wouldn't have bought the 470s (they wouldn't have been as cheap to put to work).

The 560Ti-448 was $149.00 after rebate. I could buy two for less than the price of one 660Ti.

My point being: Everyone needs to figure in their own set of circumstances and decide for themselves what is best for them to do. But to be able to do that they have to have at least a ballpark idea of what the crunching power of one card compared to another card really is.

To calculate "pay-back" or "break-even" or "total cost of ownership" you have to know all kinds of things that a test of crunching-power can't tell you.

1) Will my existing hardware support it?
2) Can I cool it?
3) What is the purchase price?
4) What is my cost of electricity?
5) How long do I plan to own it?
6) Do I play games and if so, what games?
7) Will I overclock the card? (changes power consumption assumptions)
...etc.

We can't even start the calculation if we don't know the crunching speed of the cards.
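To make the "break-even" idea concrete, here is a minimal Python sketch. It is a simplification under stated assumptions: the $119 and $350 prices come from this post, but the 100 W extra draw and the $0.20/kWh rate are borrowed from the GTX 470 vs GTX 670 estimate earlier in the thread and applied to the 660Ti purely for illustration. The function name `break_even_days` is hypothetical, and the sketch ignores crunching speed, cooling, and power-supply costs.

```python
def break_even_days(price_gap: float, extra_watts: float,
                    rate_per_kwh: float, hours_per_day: float = 24.0) -> float:
    """Days of running before the extra electricity cost eats up the purchase saving."""
    daily_cost = extra_watts / 1000.0 * hours_per_day * rate_per_kwh
    return price_gap / daily_cost


# Per-card saving from buying a refurb GTX 470 instead of a 660Ti.
price_gap = 350 - 119

# Assumed inputs (not measured): 100 W extra draw, $0.20 per kWh, running 24/7.
days = break_even_days(price_gap, extra_watts=100, rate_per_kwh=0.20)
print(f"Break-even after about {days:.0f} days ({days / 365:.1f} years)")
```

With those assumed inputs the cheaper card stays ahead for well over a year, and a lower electricity rate or fewer hours per day pushes the break-even point further out.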

|

zoom3+1=4 Joined: 30 Nov 03 Posts: 65750 Credit: 55,293,173 RAC: 49

|

I have 4 120mm Koolance 108cfm fans in the panel instead of the 230mm fan. In the top I have 2 of the same 120mm fans with a place for a 3rd; I just don't have the Y cable yet. My GTX590 is at 72C right now as it's crunching, and this is on air. I'm not sure how 2 or 3 would do temp-wise, I just know they'd be warmer. I have plans that deal in water, so 2 on air will be for 1 month only, as I want to see how much an extra card will cost Me per month on My bill. The T1 Trust, PRR T1 Class 4-4-4-4 #5550, 1 of America's First HST's

|

|

Speedy Joined: 26 Jun 04 Posts: 1643 Credit: 12,921,799 RAC: 89

|

Please find answers to the questions above and to the quoted text. I ran Fred's optimisation application version 1.7; it said the optimal number of tasks for my 470 was three units at a time. At present I'm going to run my card at one work unit at a time because I will not be here throughout the day to monitor the temperature. I ran the card with three units for about three minutes or so; the card temperature was around 85° and the fan speed, on automatic, was over 3000 rpm. The fan sounded like a jet engine; I could hear it over the top of the CPU fan. Please see the results from Fred's optimisation application below.

Starting automatic test: (x41g)
17 December 2012 - 09:10:08 Start, devices: 1, device count: 1 (1.00)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 1, average time / count: 167, average time on device: 167 Seconds (2 Minutes, 47 Seconds)

Next :---------------------------------------------------------------------------

17 December 2012 - 09:12:57 Start, devices: 1, device count: 2 (0.50)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 2, average time / count: 278, average time on device: 139 Seconds (2 Minutes, 19 Seconds)

Next :---------------------------------------------------------------------------

17 December 2012 - 09:17:37 Start, devices: 1, device count: 3 (0.33)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 3, average time / count: 407, average time on device: 135 Seconds (2 Minutes, 15 Seconds)

Next :---------------------------------------------------------------------------

17 December 2012 - 09:24:29 Start, devices: 1, device count: 4 (0.25)

---------------------------------------------------------------------------

Results:
Device: 0, device count: 4, average time / count: 543, average time on device: 135 Seconds (2 Minutes, 15 Seconds)

>> The best average time found: 135 Seconds (2 Minutes, 15 Seconds), with count: 0.33 (3)
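The way the tool appears to pick the best count can be reproduced with a short Python sketch. This is an assumption about how the output should be read, not the tool's actual code: it treats "average time / count" as the wall time for one batch and divides by the number of concurrent units to get the effective time per unit. The names `batch_seconds` and `effective_seconds` are illustrative.

```python
# Concurrent work units -> batch time in seconds, copied from the four runs above.
batch_seconds = {1: 167, 2: 278, 3: 407, 4: 543}


def effective_seconds(count: int) -> float:
    """Effective time per work unit when `count` units run at once."""
    return batch_seconds[count] / count


# Pick the count with the lowest effective time per unit.
best_count = min(batch_seconds, key=effective_seconds)

for count in sorted(batch_seconds):
    per_unit = effective_seconds(count)
    print(f"{count} at once: {per_unit:6.2f} s per unit, "
          f"{3600 / per_unit:5.1f} units per hour")

print(f"Best count: {best_count}")
```

Read this way, three and four units at once differ by only a fraction of a second per unit, so running three gives essentially the same throughput as four, and most of the gain over a single unit is already there at two.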

|

Snowmain Joined: 17 Nov 05 Posts: 75 Credit: 30,681,449 RAC: 83

|

I don't know where you got the idea that a GTX 660 is anywhere close to the speed of your GTX 570, but that's not even close to being right.

I pulled the number from memory; apparently I remembered wrong. Nonetheless, the chart in my next post addresses the $-per-crunch question.

------------------------------------------------------------------------

OK thanks for details. I guess the question should be asked: What card gives the most RAC for the least power output? Rig runs round 12 hours a day

I have removed this processor from my machine and replaced it with a quad core. It was an E8500 connected to a ZALMAN 9500led. Yup, a 3.16GHz chip on air (roughly 67 degrees F ambient) running at 4.2GHz. At 1.4v with the Zalman it would run around 60C. The chip and cooler are for sale. Unfortunately the quad won't go above 3.5GHz regardless of what I do to it. A little disappointing.

|

Snowmain Joined: 17 Nov 05 Posts: 75 Credit: 30,681,449 RAC: 83

|

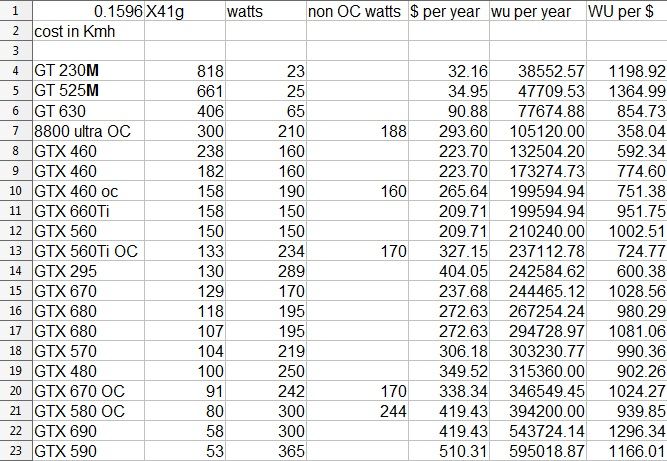

My OC GTX570classy pulled 95 seconds on this test. It could easily be pushed another 150MHz with some volts, but I left the voltage stock. My OC is light. I run 3 units at once: 64% fan, 67 degrees F ambient, card @ 59C, 99% load. I think this chart really gets down to the nitty-gritty on this subject. 990 vs 1028: that's not even a 4% increase in efficiency. Unfortunately I didn't have the GTX 660 when I made this chart. Hmm, I wonder if the standard 660 would be north or south of that 1000 WU per watt #? It is interesting how close most of these cards are. |
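For anyone who wants to reproduce this kind of efficiency comparison, here is a minimal Python sketch. The chart's exact method is not stated in the post, so the `work_units_per_kwh` formula is an assumption, as are the function names. The 95-second time is from this post, while the 219 W figure is an assumed board power for a GTX 570, not a measured draw.

```python
def percent_gain(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


def work_units_per_kwh(seconds_per_unit: float, watts: float) -> float:
    """Work units completed per kWh of electricity at a steady power draw."""
    units_per_hour = 3600.0 / seconds_per_unit
    kwh_per_hour = watts / 1000.0
    return units_per_hour / kwh_per_hour


# The two chart values compared in the post.
print(f"990 -> 1028 is a {percent_gain(990, 1028):.1f}% increase")

# 95 s per unit from the post; 219 W is an assumed draw, not a measurement.
print(f"{work_units_per_kwh(95, 219):.0f} work units per kWh")
```

Dividing the work units per kWh by an electricity rate in dollars per kWh would give work units per dollar, which is one way a cost-based chart could be built.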

|

tbret Joined: 28 May 99 Posts: 3380 Credit: 296,162,071 RAC: 40

|

Now you've put into a chart what I've been unhappy with NVIDIA about since I got my first 670. The numbering of the models is deceptive *because* we "tend" to compare a 670 with a 570 believing the "70"-part number is telling us something. With all of the pressure these companies face to make their products work on air, it is no wonder they try to make it look as though their new products are so much more efficient than their old. And frankly, for game-playing, I think some of the new technology and features may be working to reduce power-consumption. For us crunchers? Not-so-much.

You should stick the "normal" (read: reference) 560Ti numbers in there from this thread. The only number I doubt (I don't doubt your calculations, I doubt the comparison is valid) is the greater efficiency you're showing with the over-clocked GTX 670. If you pulled those from Fred's chart, I think Jason was using a CUDA version that was different than the one that tested the non-over-clocked 670. I suspect, strongly, that the efficiency gain we see there is due to the applications. I can't think of another example of anything, CPU or GPU, that gets more efficient as it is over-clocked and more energy goes to waste-heat.

|

Snowmain Joined: 17 Nov 05 Posts: 75 Credit: 30,681,449 RAC: 83

|

The OC 670 drops 4 wu per $ of power... That is to say it's not as efficient according to the chart. No change needed. But to the driver question look at the 2 680's......major increase in performance from 2 similar cards.

|

|

tbret Joined: 28 May 99 Posts: 3380 Credit: 296,162,071 RAC: 40

|

The OC 670 drops 4 wu per $ of power... That is to say it's not as efficient according to the chart. No change needed. You're right, I read it backwards for some weird reason. EDIT: In fact, I'll go so far as to say, "Hey! That was stupid of me! I apologize!"

|

W-K 666 Joined: 18 May 99 Posts: 19064 Credit: 40,757,560 RAC: 67

|

I think some of the new technology and features may be working to reduce power-consumption.

That is exactly what all the new technology is about. Whether it be GPUs or CPUs, the speeds have reached limits and cannot go any faster unless the heat (read: power) is reduced. Stock CPU frequencies have not risen since the days of the P4, so to do more work they have reduced power and then increased the number of cores. And without a major, major redesign of the motherboard and case, the stock cooling of GPUs restricts the max performance of the GPU, so again they are going for heat reduction. This includes removing parts in the GPU that don't affect the games players, i.e. removing some of the physics processing that Seti likes. I am happy with my GTX 670's, fitted to three different computers, two on my account, one on my youngest son's. They perform as expected and didn't require any other changes, like bigger power supplies. |

Snowmain Joined: 17 Nov 05 Posts: 75 Credit: 30,681,449 RAC: 83

|

No biggie. I wonder if the results of these tests bear out in the real world. The 590 and 690 own! Too bad they cost so much.

|

zoom3+1=4 Joined: 30 Nov 03 Posts: 65750 Credit: 55,293,173 RAC: 49

|

Snowmain, um, what's involved in the cost per year? Is cooling for the house in there? And what rate is electricity calculated at? I think the chart is a nice idea, but My last bill for the whole house, minus any real cooling, was $40.44, and I have one GTX590. Cooling the house would add another $20.00 for My current setup. If I had to add a 12000btu Energy Star room air conditioner (good enough for 700sqft; where I live is 840, but 1 bedroom is not cooled now anyway, so 700 is good enough), I don't know what it would really cost Me. The one I looked at said $88.80 a year, but like the pic says, that will vary with the electric rates one pays. The T1 Trust, PRR T1 Class 4-4-4-4 #5550, 1 of America's First HST's

|

|

Speedy Joined: 26 Jun 04 Posts: 1643 Credit: 12,921,799 RAC: 89

|

Snowmain, thank you for the chart, very interesting indeed. For your 600 series cards, were you running them in a PCIe 3 or 2 slot?

|

|

Grant (SSSF) Joined: 19 Aug 99 Posts: 13736 Credit: 208,696,464 RAC: 304

|

For your 600 series cards, were you running them in a PCIe 3 or 2 slot?

It wouldn't make any difference. Grant Darwin NT |

Snowmain Joined: 17 Nov 05 Posts: 75 Credit: 30,681,449 RAC: 83

|

And what rate is electricity calculated at?

Says it right there on the chart: $.1596 per kilowatt hour. Which is my entire power bill divided by the kWh used.

|

zoom3+1=4 Joined: 30 Nov 03 Posts: 65750 Credit: 55,293,173 RAC: 49

|

Snowmain, please don't get Me wrong, I was just asking, and since You did answer My question, I Thank You. Oh, and My rate per kWh is roughly $0.0533, as that's a composite of the sources the park pulls electricity from. The T1 Trust, PRR T1 Class 4-4-4-4 #5550, 1 of America's First HST's

|

©2024 University of California

SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.