Just another GPU value chart


| Author | Message |

|---|---|

shizaru (Joined: 14 Jun 04; Posts: 1130; Credit: 1,967,904; RAC: 0)

|

Ok, disclaimers: Yada yada YMMV yada yada prices are subject to change yada yada not a reflection of RAC yada yada batteries not included yada yada and all the other stuff you guys already know far better than I.

Most info is from Seti’s 3rd most important bookmark, http://en.wikipedia.org/wiki/Comparison_of_Nvidia_graphics_processing_units (#1 being the NC forum and #2 our mascot The Cricket:)

The first number (after the GPU number) is GFLOPS. The last number is the whole point of this little exercise and is GFLOPS per US dollar. Prices are conservative (especially for the low end cards) but realistic.

| GPU | GFLOPS | Price | GFLOPS per $ |
|---|---|---|---|
| GeForce GT 610 | 155.5 | $40 | 3.8875 |
| GeForce GT 620 | 268.8 | $50 | 5.376 |
| GeForce GT 630 (DDR3) | 311.0 | $60 | 5.183 |
| GeForce GT 640 (DDR3) | 691.2 | $90 | 7.68 |
| GeForce GTX 650 | 812.5 | $100 | 8.125 |
| GeForce GTX 650 Ti | 1420.8 | $150 | 9.472 |
| GeForce GTX 660 | 1881.6 | $230 | 8.181 |
| GeForce GTX 660 Ti | 2460 | $290 | 8.483 |
| GeForce GTX 670 | 2460 | $370 | 6.648 |
| GeForce GTX 680 | 3090.4 | $460 | 6.718 |
| GeForce GTX 690 | 2× 2810.88 (5621.76) | $1000 | 5.62176 (ouch) |

What about Seti’s favourite workhorse, the 560 Ti? (You know… when it’s not spitting out errors faster than Kerouac on Benzedrine)

| GPU | GFLOPS | Price | GFLOPS per $ |
|---|---|---|---|
| GeForce GTX 560 Ti | 1263.4 | $210 | 6.016 |

What’s that you say? You aren’t getting free electricity? Fair enough… Let’s pretend that it costs you $1.2 per watt per year running 24/7, and that you’ll run the card for 3 years. What then?

| GPU | GFLOPS | Price | Electricity (3 years, 24/7) | GFLOPS per $ |
|---|---|---|---|---|
| GeForce GT 610 | 155.5 | $40 | $104.4 | 1.077 |
| GeForce GT 620 | 268.8 | $50 | $176.4 | 1.187 |
| GeForce GT 630 | 311.0 | $60 | $234 | 1.058 |
| GeForce GT 640 | 691.2 | $90 | $234 | 2.133 |
| GeForce GTX 650 | 812.5 | $100 | $230.4 | 2.459 |
| GeForce GTX 650 Ti | 1420.8 | $150 | $396 | 2.602 |
| GeForce GTX 660 | 1881.6 | $230 | $504 | 2.563 |
| GeForce GTX 660 Ti | 2460 | $290 | $540 | 2.964 |
| GeForce GTX 670 | 2460 | $370 | $612 | 2.505 |
| GeForce GTX 680 | 3090.4 | $460 | $702 | 2.660 |
| GeForce GTX 690 | 5621.76 | $1000 | $1080 | 2.703 |

Again, the last number is GFLOPS per dollar. Higher is better. The second $ amount is the cost of electricity running 24/7 for 3 years.

And let’s not forget:

| GPU | GFLOPS | Price | Electricity (3 years, 24/7) | GFLOPS per $ |
|---|---|---|---|---|
| GeForce GTX 560 Ti | 1263.4 | $210 | $612 | 1.537 (ouch) |

PS As Murphy would have it, I began to write this right before the power outage and tried to preview it during the outage. Many verbs and nouns followed, none of which were suitable for the Boards I’m afraid. Just please show some leniency if I made any mistakes. |
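For anyone who wants to redo the charts with their own prices or electricity rate, the arithmetic can be sketched roughly like this in Python. The function name is made up for illustration, and the 150 W figure for the 660 Ti is not stated in the post; it is inferred from the $540 electricity cost ($540 / 3 years / $1.2 per watt per year).

```python
# Rough sketch of the arithmetic behind the two charts.
# The defaults are the post's assumptions, not measured values.
USD_PER_WATT_YEAR = 1.2  # assumed cost of 1 W running 24/7 for a year
YEARS = 3                # assumed time the card stays in service


def gflops_per_dollar(gflops, price_usd, watts=0.0,
                      years=YEARS, usd_per_watt_year=USD_PER_WATT_YEAR):
    """GFLOPS divided by purchase price plus electricity over `years`."""
    electricity_usd = watts * usd_per_watt_year * years
    return gflops / (price_usd + electricity_usd)


# GTX 660 Ti: first chart (purchase price only), then second chart
# (150 W is inferred from the post's $540 electricity figure).
print(round(gflops_per_dollar(2460, 290), 3))       # 8.483
print(round(gflops_per_dollar(2460, 290, 150), 3))  # 2.964
```

Swapping in a different `usd_per_watt_year` or `years` shifts the ranking toward cheaper or more frugal cards, which is most of what the two charts are showing.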

|

Team kizb (Joined: 8 Mar 01; Posts: 219; Credit: 3,709,162; RAC: 0)

|

|

zoom3+1=4 (Joined: 30 Nov 03; Posts: 65755; Credit: 55,293,173; RAC: 49)

|

For Me it's this monster...

GeForce GTX 590 2488.3 $699 - 3.56

Now I don't know if You used the dollar amount listed on the wiki or not, but that's what I did, rounded up since it was so close. I don't know how much the PC uses, but My last bill was for $40.44, the average cost per kWh was about $0.0502, and I used 434.0 kWh last month for the whole house. Yeah, that's what My bill shows; not like I can do anything about that or that I'd want to, I'd be nuts if I did.

The T1 Trust, PRR T1 Class 4-4-4-4 #5550, 1 of America's First HST's

|

shizaru (Joined: 14 Jun 04; Posts: 1130; Credit: 1,967,904; RAC: 0)

|

> Very interesting read, thanks for putting it together. So If I understand it correctly a 690 doesn't give you the best value, a 650 Ti wins?

Here's the way I'd look at it... For casual crunchers, refer to the first chart. For 24/7 crunchers, refer to the second chart. So I'm guessing for most people here in the forums, the second chart is the way to go:) In the second chart the 660 Ti wins...

Edit: @VW You are very very lucky:) http://en.wikipedia.org/wiki/Electricity_pricing#Global_electricity_price_comparison |

|

tbret (Joined: 28 May 99; Posts: 3380; Credit: 296,162,071; RAC: 40)

|

> Ok, disclaimers:

Here's the problem: The GFLOPS numbers are NOT a reflection of SETI production. I know that sucks, but it's true. So saying "not a reflection of RAC yada yada," is necessary and true and can't be stressed enough. It makes the comparison deceptive even if you didn't intend to deceive anybody.

I own four 660Tis and six 560Tis and I can tell you that the 560Tis are faster at **SETI** number crunching despite the 660Ti's greater price and twice as much RAM. The TDP numbers are likewise deceptive if you're going to throw the 6xx into "boost" clock speeds or overclock them like all the utilities are designed to let you do.

Your comparisons are great, it's just that the "benchmark" isn't useful as a proxy for crunching SETI, so why compare this way? The 660Ti is not quite the equal of a 560 (I own three) despite certain people's insistence that their shiny new GPU is superior to someone else's one year old "vintage" GPU. The 600-series ARE better... at playing new games.

The only reasonable basis for crunching comparison would be something like Fred's tool. Ok, so it isn't perfect, and ok, so we don't have a perfect list of every GPU model, but look here anyway: FRED'S LIST

What I see on that list is pretty doggone reflective of what I see in daily use, and we all know that the TDP figures are the worst kind of misinformation for OC, SC, SCC, Ultra, and Classified cards, as the power consumption goes up geometrically as clock speeds are increased. Of course, I'm assuming that the cards on that list that I don't own are also reflective of what their owners see in real life use.

It looks like to put this whole debate to bed I'm going to have to build a "standard machine" and put every card I own in it, with a kill-a-watt meter on the system, and use Fred's tool (or something) to get a meaningful comparison that everyone (except for those *convinced* of something already; they can't be helped) can agree is a meaningful comparison.

The problem is that I'm not going to buy a 480, 580, 680, 590, or 690 to make the comparison. I can only do 460, 470, 560, 560Ti, 560Ti-448, 660Ti, and 670 comparisons and I can't do any of it until sometime in January.

Should I even bother, or do you all think it would just lead to another argument? What would you say is an acceptable *MINIMUM* processor, board, PSU, & RAM to remove those arguments? I don't want to take the time to do it if nobody is going to believe the results.

EDIT: The information I can provide by doing that is already pretty accurately reflected in Fred's list with the exception of power consumption. Is it worth the time, money, and effort?

DOUBLE EDIT: And then what version of the application do I use?

|

dancer42 (Joined: 2 Jun 02; Posts: 455; Credit: 2,422,890; RAC: 1)

|

AMD RELEASED NEW ACCELERATED STREAMING SDK 2.8. If it doubles the speed of ATI cards, NVIDIA may have to up the bar again. You may have to rechart! LOL |

|

Claggy (Joined: 5 Jul 99; Posts: 4654; Credit: 47,537,079; RAC: 4)

|

> AMD RELEASED NEW ACCELERATED STREAMING SDK 2.8

It doubles the speed of some benchmarks; it doesn't double speed overall. If you can open your front door at twice the speed as before, but you walk through the door at the same speed and close it at the same speed as before, you won't have doubled your performance at entering your house.

Claggy |

|

David S (Joined: 4 Oct 99; Posts: 18352; Credit: 27,761,924; RAC: 12)

|

> Ok, disclaimers:

Thanks for reminding me of that.

> I own four 660Tis and six 560Tis and I can tell you that the 560Tis are faster at **SETI** number crunching despite the 660Tis greater price and twice as much RAM. The TDP numbers are likewise deceptive if you're going to throw the 6xx into "boost" clock speeds or overclock them like all the utilities are designed to let you do.

Sounds interesting, but I guess I'd have to see your report of your methodology to say whether I'd believe it. I think I'd suggest running Boinc on the GPUs only to take any CPU WU variances out of the mix. But you probably know how to do this better than I do.

David

Sitting on my butt while others boldly go, Waiting for a message from a small furry creature from Alpha Centauri. |

shizaru (Joined: 14 Jun 04; Posts: 1130; Credit: 1,967,904; RAC: 0)

|

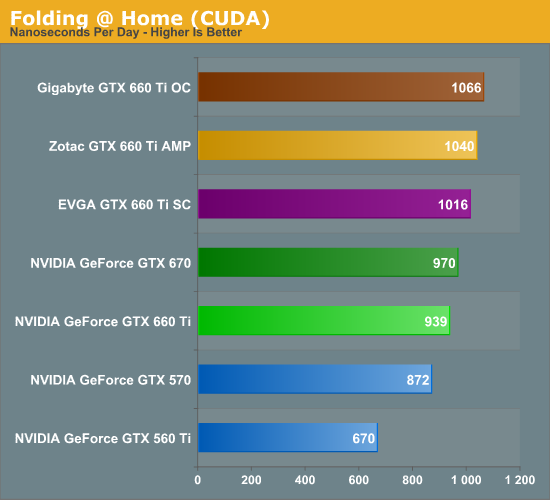

@tbret Like I said, you guys know this stuff far better than I do. Also, numbers are just numbers and no two people looking at the above charts (or any chart) will draw the same conclusions. Most people won't take ANY part of these into account. Yay for human diversity:) [/philosophy] Please don't take this the wrong way. I think you started off with good data (RAC) but then threw in some bad data (Watts) and drew the wrong conclusion on the 660 TI vs 560 TI. I'll try to explain: So the two cards are nowhere near their GFLOPS at SETI. You say their RAC is close @Seti with the 560 being slightly better. I don't doubt it's true but I do wonder why that is. For example:  Now most people will rush to blame the (Seti/Lunatics) apps, but before you guys do, riddle me this: If a 670 has an RAC of ~33k and a 560 TI has an RAC of ~26k, why isn't a 660 TI producing ~33k? It's not the apps, so what is it? As to your conclusion tbret here is were I disagree: On average a 560 TI is 10-15W more than a 660 TI. But under load it's actually the 560 TI that's all over the place. It can be anywhere from 40 to 70 Watts more in stress tests. http://www.techpowerup.com/reviews/MSI/GTX_660_Ti_Power_Edition/26.html http://www.techpowerup.com/reviews/ASUS/GeForce_GTX_660_Ti_Direct_Cu_II/26.html http://www.techpowerup.com/reviews/Zotac/GeForce_GTX_660_Ti_Amp_Edition/26.html That's an extra ~$150 over a 3 year period. But ignore that for a moment and have another look at the above Watts and costs in the second chart. Both GPUs cost ~$800 over a 3 year period. That looks like a tie to me, if both cards are similar in RAC. How did you come to the conclusion that the 560 TI is better? Have I missed something? As for the benchmarks you want to do (especially since you threw in the kill-a-watt) I think that is a fantastic idea:) And long overdue. I'm a bit surprised more people don't run benchmarks here on Seti. Why do you think people won't believe you? 
But I'd wait for the new Work Units to roll out. Otherwise you'd be going to a lot of trouble only to find your hard work out of date overnight! (I've been wanting to do a test of a bunch of NVIDIA drivers on my laptop, but I'm holding back for this reason) |

HAL9000 (Joined: 11 Sep 99; Posts: 6534; Credit: 196,805,888; RAC: 57)

|

When using the Wikipedia pages to compare cards, I just use the FLOPS/watt figure to find the most efficient cards in the series. I then work out their purchase cost and cost to own to find which one I think is the best value in the performance range I want.

For the electric cost I use TDP * 8.76 * cost per kWh. The 8.76 is the number of kilowatt-hours that one watt of continuous draw uses in a year (8,760 hours / 1000). You could also take 8.76 * cost per kWh to come up with a value like the one Alex was using, which seems to be about $0.137 per kWh to get the $1.20 per watt per year.

I get my cost per kWh by dividing the bill amount by the kWh used. So if my bill is for $53.87 and 306 kWh, I paid an average of $0.176 per kWh. I do this because my electric is billed for generation and transmission separately. While it might say I'm paying $0.06 per kWh for generation, that isn't really my cost to power things.

SETI@home classic workunits: 93,865 CPU time: 863,447 hours

Join the BP6/VP6 User Group: http://tinyurl.com/8y46zvu
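The electricity formula above is easy to get wrong by a factor of 1000, so here is a minimal Python sketch of it. The helper names are invented for illustration; the bill figures and the $1.20 per watt per year come from the thread, and the sketch assumes the card draws its full TDP around the clock, which is the same simplification the formula makes.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, i.e. 8.76 kWh per watt per year


def avg_cost_per_kwh(bill_usd, kwh_used):
    """Effective rate: the whole bill divided by the energy used."""
    return bill_usd / kwh_used


def yearly_cost(tdp_watts, usd_per_kwh):
    """Cost of drawing `tdp_watts` continuously for one year."""
    kwh_per_year = tdp_watts * HOURS_PER_YEAR / 1000
    return kwh_per_year * usd_per_kwh


rate = avg_cost_per_kwh(53.87, 306)
print(round(rate, 3))         # 0.176
print(round(1.20 / 8.76, 3))  # 0.137, the rate implied by $1.20 per watt-year
print(yearly_cost(1, 1.20 / 8.76))  # roughly 1.2: one watt for one year
```

Using the whole-bill rate rather than the advertised generation rate is the point of the last paragraph of the post: fixed and transmission charges end up in the effective price per kWh.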

|

|

tbret (Joined: 28 May 99; Posts: 3380; Credit: 296,162,071; RAC: 40)

|

Yes, you missed something important. Nobody said the 560Ti and 660Ti are similar in RAC. They aren't. The 560Ti is the hands-down winner of that race.

The 560Ti will do about 16% more work than the 660Ti. It "has a right" to consume 16% more power. By one of those charts it consumes less than 10% more, peak. And the 560Ti is much cheaper to purchase.

I was saying that the 560 (no Ti) is the rough equal of a 660Ti. It is much, much cheaper to purchase. Unfortunately, the 560 does not appear in the charts.

The conclusion I have informally drawn for myself is that **for SETI** the 670 is like an improved 560Ti. It draws slightly less power and does more work. It also costs twice as much to purchase.

The comparison you are making is between two cards that have big differences. Ok, so in your analysis you get the same "cost of ownership" over a three year period. You've also been doing 16% less work, so you have to hold the card more than an additional year, wow... doing a little extrapolating in my head, something like another year and a half to make the output/dollar truly equal.

Are you going to keep your video card four and a half years? Are you sure? Won't it be cheaper to replace it than keep it if the Maxwell cards are really as efficient as NVIDIA says they will be? Or are you mostly concerned with power costs? If so, get a Kepler and crunch less, or turn your GTX 480 off at night.

For me, this is the genius of Fred's measurements. All I want to know is how long it takes to do a work unit so I can compare cards with similar RAC in price. Then, and only then, do I want to know how much power I will save. Generally speaking, similar "RAC" cards burn similar amounts of electricity with *minor* improvements in efficiency moving to Kepler at peak.

Why peak? Because of the way the Kepler cards self-overclock. Yes, the Kepler will burn less energy doing the same "work" if the "work" is sitting idle with a spreadsheet open. Make it crunch SETI and it goes into "boost" and almost all of that efficiency gain disappears.

To me (this is just the way I think) the question boils down to, "Am I willing to spend more money now, to get similar production now, to get a payback some four or more years in the future?" My answer is "no." I'm not going to spend extra money for a nebulous benefit so far out I don't even know if I'll be around to see it.

Will I be crunching SETI that many years from now? The project could go broke. I could be diagnosed with a dread disease. There might be a technology breakthrough and I will want to replace my equipment, or there may be a change in the type of work SETI does. And I'm taking that risk to start saving myself $5/month on my electricity bill five years from now? That's a long time to count on nothing changing for a minimal benefit that doesn't start accruing to me until after the warranty expires and after I've reached break-even. It takes a very, very long time for $100 more in card cost to pay for itself in power savings.

If I were in Brazil with a very high cost and I had a three year equipment replacement schedule, I'd buy the most efficient thing I could find (RAC/watt) every three years (initial outlay be damned), or I wouldn't run SETI at all. I suspect the payback period is more like a year and a half, maybe two.

My SSC GTX 670s are my all-time favorite cards. Period. B U T... if I look at it objectively, they were foolish purchases on a RAC/$$ (now or later) basis.

Most people aren't going to make that connection and they don't care. They have one or two slots they can fill with one or two cards and their wallets are only so thick. It's "easier" to pay an extra $6/month in electricity bills for the next five years than to fork over $200+ more, right now. And most of them want to play games. For that, newer is better, no doubt.

A nice, objective, little look at what we do here, crunch, leads to conclusions that fly in the face of what we want to believe.

NVIDIA does a good job of marketing. Look at what they have done. They've taken all sorts of "heat" (no pun intended) for the power consumption of their cards. So they keep the x60Ti model number and place it the same in their pricing line-up, but produce a less capable card (for our purposes). The less capable card burns less electricity (duh). Then they invite comparisons between the two x60Ti models which are not equivalent (for our purposes).

You have to compare the 560 (non-Ti) to the 660Ti. If the 660Ti is superior (it isn't) then you have to ask if it is worth the extra money. I don't think so. Everyone else can draw their own conclusions and that's just peachy. I don't feel any need to influence them.

I DO hate to see confusion over performance per $ or performance per Watt and I hate to see others rationalizing their decisions based on subjective qualities when facts and figures are ascertainable. Neither of us can help that the facts don't support our prejudices (mine for the GTX 670).

The challenge is to produce unassailable facts and figures. I know that's what you are trying to do, but you are making RAC assumptions that don't hold up under scrutiny, such as it is, with the facts and figures I have today. We just have to figure out how to conduct the test in such a way that SETI crunchers can believe the results.

If those tests show I'm mistaken, I'll be the first to say, "Contrary to my earlier statements, I was surprised to find..."

|

Al (Joined: 3 Apr 99; Posts: 1682; Credit: 477,343,364; RAC: 482)

|

If you are serious about wanting to do this, then yes, I for one would find it very useful to see real world results. I've understood for some time that benchmarks and specs are only a baseline for comparison between different models, and the saying your mileage may vary (considerably) often applies. If you were to do this, you would provide a lot of useful real world info to those of us looking to upgrade our systems to a more efficient GPU. I cast my vote for Yes, Please.

|

|

Horacio (Joined: 14 Jan 00; Posts: 536; Credit: 75,967,266; RAC: 0)

|

> If a 670 has an RAC of ~33k and a 560 TI has an RAC of ~26k, why isn't a 660 TI producing ~33k? It's not the apps, so what is it?

There are several reasons... One of them, and probably the most important one, is that the 670 and the 560Ti have 256-bit memory buses while the 660Ti has a 192-bit bus, and because of that it can't use the dual-channel trick on the whole memory in a card with 2 GB... so the memory in the 660Ti is accessed through a narrower bus and part of the memory is on a single channel (so half the speed)... Of course, doing only one WU at a time (using little memory) you will get the 660Ti in between the others (on crunching time), but when you optimize the usage by doing several tasks, the 660Ti will not scale as well as the others...

Besides that, the 560Ti shaders run at twice the speed of the cores, while in the Kepler series the shaders run at the same speed, and while the Kepler shaders are much more optimized, it seems that those optimizations are good mostly for games and not so much for CUDA...

The last point is, the 560Ti was a kind of trial for a lot of technologies that were later added in the Kepler architecture, so they were exceptionally faster than other Fermi GPUs; the 660Ti instead is just another step in the market segments...

That said, it doesn't mean that the 560Ti is better than the 660Ti. Which one is better depends on what you care about: raw RAC? Credits/price? Raw power? Credits/power? I'm sure the chosen one will be different in each case...

|

|

tbret (Joined: 28 May 99; Posts: 3380; Credit: 296,162,071; RAC: 40)

|

> If you were to do this, you would provide a lot of useful real world info to those of us looking to upgrade our systems to a more efficient GPU. I cast my vote for Yes, Please.

I am extremely busy until the latter part of January. I'll be similarly tied up every weekend in February, but that leaves after-work time in February.

As we get close to that, the task for us to do collectively is to find *inexpensive* (there's a limit to my curiosity) components that we will let stand as our "standard" computer. If I do this, the last thing I want is to have spent the time and effort only to have some fan-boy or another scream that if we had just selected "x" memory and overclocked it to "x" we'd have a different result.

When I'm in a position to order parts and stick it all together, we'll select these things more-or-less as a committee (but I get the veto since I've got to spend the money). Good gosh, I was hoping to avoid Windows 8 for a while (read: years).

We'll also have to decide on an acceptable test. I think Fred's program is brilliant, but if someone can shoot holes in it, I'm open to suggestions.

|

shizaru (Joined: 14 Jun 04; Posts: 1130; Credit: 1,967,904; RAC: 0)

|

tbret c'mon man focus:)

a) 36 months + 16% is not 4 and a half years.

b) You're quoting Crysis 2 benchmarks. Look at the FurMark numbers, it's the 560 Ti that's "turbo boosting".

@Horacio Thanx for the reply. OK, I kinda get why the memory bus could come into play when running multiple WUs. But the link tbret provided to Fred's forums shows the 660 Ti slower than the 560 Ti at (what I think is) a single task. Could something else be coming into play? Like ROPs or something? |

|

Horacio (Joined: 14 Jan 00; Posts: 536; Credit: 75,967,266; RAC: 0)

|

@Horacio I think that the list gives the best time at the best count for each card, i.e. each time is the best (shortest) time each GPU can reach at its optimal value of count. So when it says 100 sec per WU it means (for example) 3 WUs each taking 300 sec, which is like one every 100 sec. IIRC most GPUs get their best time with a count of 3, and I think that when the best value is at another count he shows it on the list... (but I might be wrong)
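The "equivalent time per WU" idea described above can be sketched in a few lines of Python. This only illustrates the arithmetic and is not how Fred's tool is actually implemented; apart from the 3 WUs at 300 sec example, the timings below are made-up placeholders, and both function names are hypothetical.

```python
def effective_seconds_per_wu(seconds_per_task, count):
    """Equivalent time per work unit when `count` tasks run at once."""
    return seconds_per_task / count


def best_count(timings):
    """timings maps count -> seconds each task takes at that count."""
    return min(timings,
               key=lambda c: effective_seconds_per_wu(timings[c], c))


# Hypothetical timings, except count=3 at 300 sec (the example above).
timings = {1: 130.0, 2: 220.0, 3: 300.0, 4: 420.0}

best = best_count(timings)
print(best, effective_seconds_per_wu(timings[best], best))  # 3 100.0
```

With these placeholder numbers a single task looks fastest in wall time (130 sec), yet running three at once gives the best throughput, which is why a list of optimum equivalent times can differ from single-task times.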

|

|

tbret (Joined: 28 May 99; Posts: 3380; Credit: 296,162,071; RAC: 40)

|

You're forgetting that the other card continues to run also, so it has to "save" enough power over some time past break-even to break even. It's not just 16%, it's also that 16% + what the card is consuming while it "catches-up" at a very slow rate. I'm saying that by the time the 600 catches the 500, the 500 has pulled away again. It isn't like the 500 stops to let the 600 catch-up.
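One way to put numbers on the catch-up argument is to compare cost per unit of work over time. The Python sketch below is only as good as its inputs, all of which are assumptions pulled from earlier in the thread: prices and wattages implied by the first post's charts (170 W and 150 W, which are TDP-based and disputed above), $1.2 per watt per year, and the claimed 16% output advantage for the 560 Ti. The function names are made up for illustration.

```python
USD_PER_WATT_YEAR = 1.2  # assumption from the first post


def cost_per_work(price_usd, watts, rel_output, years):
    """Total cost of ownership divided by total (relative) work done."""
    total_usd = price_usd + watts * USD_PER_WATT_YEAR * years
    return total_usd / (rel_output * years)


def break_even_years(a, b):
    """Years until cards a and b cost the same per unit of work.

    Each card is (price_usd, watts, rel_output). Returns None when
    the two cost curves never cross at a positive time.
    """
    (pa, wa, ra), (pb, wb, rb) = a, b
    ca, cb = wa * USD_PER_WATT_YEAR, wb * USD_PER_WATT_YEAR
    denom = ca * rb - cb * ra
    if denom == 0:
        return None
    t = (pb * ra - pa * rb) / denom
    return t if t > 0 else None


gtx_560_ti = (210, 170, 1.16)  # 16% more work is the thread's claim
gtx_660_ti = (290, 150, 1.00)

print(break_even_years(gtx_560_ti, gtx_660_ti))       # None
print(round(cost_per_work(*gtx_560_ti, years=3), 1))  # 236.2
print(round(cost_per_work(*gtx_660_ti, years=3), 1))  # 276.7
```

With these particular inputs the curves never cross, but the result is sensitive to the wattage gap: plug in the 40 to 70 W load difference quoted from the stress tests instead of 20 W and a break-even point appears. That is the disagreement in a nutshell, and measured power figures would settle it.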

|

|

tbret (Joined: 28 May 99; Posts: 3380; Credit: 296,162,071; RAC: 40)

|

Alex, I have been occupied; sorry about that.

The numbers in Fred's list are the "optimum" numbers. As you probably know, it tests one, two, three, four work units running simultaneously and then reports the optimum number of simultaneous tasks and the average speed of a work unit under those conditions. So, yes, those are the "equivalent" work unit times, not an actual single work unit.

Fred's tool will let you do the one-at-a-time test if you want; it's just that most of us really want to know how to set the variable of 1/2/3/4 in order to get the most productivity from our cards, so it's written to tell us that. The 200-series cards are going to be running one work unit at a time and the Fermis, mostly, are optimized at three at a time, as are most of the Keplers, but maybe there's a 2-at-once number in there for some model.

That doesn't mean that the average user is going to run multiple instances at a time. I have no idea what the average user will do.

Talking about the "boost" and the power readings all over the place, I think either we're confusing two things, or we're using different words to talk about the same thing. "GPU Boost" is a feature NVIDIA added to the Kepler cards (I'm not sure whether the re-badged cards... the 600-series that are really just renamed 500-series... have "GPU Boost" or not). You can go to NVIDIA's website and watch a video about GPU Boost if you'd like, but it's mostly telling "gamers" how much more wonderful their gaming experience will be due to this automatic clock adjustment.

You should know that the 600-series cards are "throttled" from the factory and by using "Precision X" you can raise the target voltage of the Boost feature beyond the TDP specification for the card. So... what's the difference between that and turning down the clocks and voltage on a 560Ti to make it "appear" that you are saving electricity?

Your point is well taken about the power consumption of the 500-series cards, and that's part of why my favorites of what I own are the 670s. They are burning about the same juice as a 560Ti and doing more work. I really like mine a lot because they exhaust the heat, unlike our Gigabyte overclock 560Tis that dump it in the case.

If I have an objection to the 660Tis it would be their cost. As a gaming video card they may be worth every penny, I don't know. As a cruncher, we can do better on an initial cost/"credit" basis.

Once you throw in the cost of electricity and try to compute a "cost of ownership" then you start solving for multiple variables and you really can make almost any argument you (or I) want to by just setting the calculation up to bias the result. The first thing you have to do is determine whether your "value" is in the card + its electricity cost, or if you'd rather look at the "value" from a RAC perspective. The result of the calculation is necessarily going to be different.

I was recently able to purchase an EVGA GTX 560Ti-448 Classified Ultra for $150 after a $30 rebate. That card is not anything I would have bought last year when they were "new!" and expensive, but at $150... it is a screamer. It bests my 950MHz Gigabyte 560Ti and embarrasses the SC 660Ti I paid $300 for.

In our comparison, if I set the price variable on that card at $150 and we compare that to a $300 SC 660Ti, the whole aesthetic of the comparison changes, if the ultimate result doesn't. Eventually the 660Ti will save enough electricity to "best" the 560Ti-448 in total cost of ownership. Eventually. "In the long run." In the long run we're all dead.

Here's something we aren't even considering: You know you can generally tell, relatively, how much electricity a card is consuming by how much heat it generates. Jason has worked magic on the applications for SETI@Home by making the hardware produce as much useful work as possible (the higher RAC for optimized apps is apparent). The cards get much hotter using the optimized applications. They are burning more electricity and doing more work. That's why one of my questions is "What application do we use to make the comparisons?"

I don't mean to sound argumentative. I'm only trying to expose the issues involved in making these multi-faceted comparisons.

Take another example: Can someone buy two 660s and not have to buy a new $200 power supply to run them, but would have to with two 560Tis? Can we figure that into the RAC/dollar? Sure, it's legitimate. Where do we stop doing that? How about with air conditioning consumed or heating oil not consumed?

That's why I tend to focus on "RAC" per initial dollar, because the comparison is as free of all that ambiguous stuff as possible.

And that, my friend, is the reason I'm just not a huge fan of cutting-edge in general, and I'm a detractor from the 600-series fan club in specific. I realize other people may prioritize differently and I think it's great that we aren't all identical clones.

|

|

Team kizb (Joined: 8 Mar 01; Posts: 219; Credit: 3,709,162; RAC: 0)

|

Some great information and insight! All of the information in this thread is helping me decide which direction to take my crunchers next.

@tbret, could you run the optimum number program on your 470 to add the data to Fred's list? I'm away from my main rigs and am trying to figure out if I should finish setting up a water cooled 3x 470 rig or dump the 470s in favor of maybe one 690. I already have the cards and blocks, but would still need to buy the rads, pump and fittings.

My Computers: █ Blue Offline █ Green Offline █ Red Offline |
