The Server Issues / Outages Thread - Panic Mode On! (119)


Ville Saari Joined: 30 Nov 00 Posts: 1158 Credit: 49,177,052 RAC: 82,530

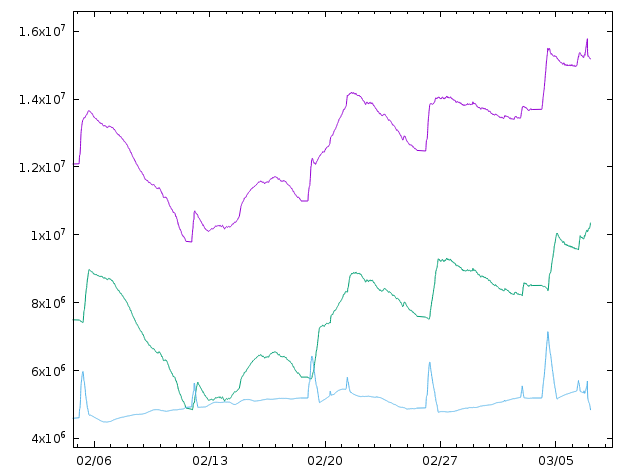

This graph shows that 'waiting for validation' on SSP really means 'waiting for validation or assimilation'. The purple curve is the validation queue size as shown on SSP. The green curve is the assimilation queue size from SSP, multiplied by 2.2 to scale it from workunits to results. The blue curve is their difference, i.e. the true number of results waiting for validation.

The blue curve looks very much like the validation queue did before the assimilation problem started: stable around 5 million, with a sharp spike just after each weekly downtime when everyone reports the results they crunched during the downtime. We also see that the spike drops as fast as it climbed, so validation has worked fine, but simultaneously with this drop the assimilation curve climbs and then stays there. So the validated results get stuck in the assimilation queue. The assimilation queue descends much more slowly; so slowly that the next downtime hits before it has returned to the level it had before the previous downtime. So every downtime pushes it higher and higher.
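The blue curve is plain arithmetic on two SSP counters. A minimal sketch, assuming the post's 2.2 results-per-workunit factor; the function name and the sample counts in the usage line are hypothetical, chosen only to show the calculation, not figures read from SSP:

```python
# Sketch of the "true validation backlog" (blue curve) calculation.
# Assumption: SSP's 'waiting for validation' figure (in results) also
# contains results whose workunits are really waiting for assimilation.

RESULTS_PER_WORKUNIT = 2.2  # scaling factor used in the post


def true_validation_backlog(waiting_for_validation: int,
                            assimilation_queue_wus: int,
                            results_per_wu: float = RESULTS_PER_WORKUNIT) -> int:
    """Return the number of results genuinely waiting for the validator.

    waiting_for_validation: SSP 'waiting for validation' count (results).
    assimilation_queue_wus: SSP assimilation queue size (workunits).
    """
    # Convert the assimilation queue from workunits to results (green curve).
    assimilation_as_results = assimilation_queue_wus * results_per_wu
    # Purple minus green, rounded to whole results (blue curve).
    return round(waiting_for_validation - assimilation_as_results)


# Hypothetical counts, for illustration only.
print(true_validation_backlog(9_000_000, 1_800_000))  # 5040000
```

The 2.2 factor is the only assumption carried over from the post; if the true average number of results per workunit differs, only the `results_per_wu` argument needs to change.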

Ville Saari Joined: 30 Nov 00 Posts: 1158 Credit: 49,177,052 RAC: 82,530

> No, they haven't, go to the very end of the listing for my MB Valid tasks and you will find, 10 tasks issued 30 Jan 2020

I can't browse the listing of valid tasks alone. Only the 'all tasks' list really works. Choosing anything else just leaves the browser loading the page forever without ever getting anything. That happens even when I try to click my invalid task list, which has only two tasks in it.

TBar Joined: 22 May 99 Posts: 5204 Credit: 840,779,836 RAC: 2,768

I tried it with a Host with many Valids and it's still spinning. I then tried it with one with a smaller number and reached a large number of WUs dated 18 Feb that all fail to open with the error "Unable to handle request can't find workunit". It's just a WAG, but I would imagine it would be difficult to Assimilate something that can't be found... I dunno. See if you can open this within a few minutes: https://setiathome.berkeley.edu/results.php?hostid=8097309&offset=3260&state=4

kittyman Joined: 9 Jul 00 Posts: 51469 Credit: 1,018,363,574 RAC: 1,004

LOL, I suppose that would be true.

Meow. "Freedom is just Chaos, with better lighting." Alan Dean Foster


TBar Joined: 22 May 99 Posts: 5204 Credit: 840,779,836 RAC: 2,768

Every single WU on every page? It goes on for pages, starting with Feb 29: https://setiathome.berkeley.edu/results.php?hostid=8097309&offset=3160&state=4

W-K 666 Joined: 18 May 99 Posts: 19118 Credit: 40,757,560 RAC: 67

> Every single WU on every page? It goes on for pages, starting with Feb 29, https://setiathome.berkeley.edu/results.php?hostid=8097309&offset=3160&state=4

That is a demonstration of progress. The listing of valids comes from the replica, where the task is still visible, but the workunit page comes from the master, and there the workunit has been purged.
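The replica/master explanation can be illustrated with a toy model. This is a hypothetical sketch, not SETI@home or BOINC code: the class and method names are invented, and it assumes only what the post says, namely that the valid-task list is read from a lagging replica while the workunit page is read from the master:

```python
from __future__ import annotations


class ToyProjectDatabases:
    """Hypothetical master/replica pair with replication lag."""

    def __init__(self) -> None:
        self.master: dict[int, str] = {}   # wuid -> status
        self.replica: dict[int, str] = {}  # last copy taken from the master

    def add(self, wuid: int, status: str) -> None:
        self.master[wuid] = status

    def purge(self, wuid: int) -> None:
        # Purging deletes the row on the master only.
        self.master.pop(wuid, None)

    def replicate(self) -> None:
        # The replica catches up only when replication runs.
        self.replica = dict(self.master)

    def valid_list(self) -> list[int]:
        # Task list pages: served from the (possibly stale) replica.
        return sorted(w for w, s in self.replica.items() if s == "valid")

    def workunit_page(self, wuid: int) -> str:
        # Workunit pages: served from the master.
        if wuid not in self.master:
            return "can't find workunit"
        return f"workunit {wuid}: {self.master[wuid]}"


db = ToyProjectDatabases()
db.add(1, "valid")
db.add(2, "valid")
db.replicate()
db.purge(1)                 # purged on master, replica not yet updated
print(db.valid_list())      # [1, 2]
print(db.workunit_page(1))  # can't find workunit
db.replicate()
print(db.valid_list())      # [2]
```

In this model the listed-but-missing entries disappear on their own once the replica catches up, which is consistent with reading the errors as progress rather than as lost work.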

TBar Joined: 22 May 99 Posts: 5204 Credit: 840,779,836 RAC: 2,768

You do realize Eric ran that script many hours ago, right? I'll give you another 2.5 hours though. Every WU older than 29 Feb...

W-K 666 Joined: 18 May 99 Posts: 19118 Credit: 40,757,560 RAC: 67

> You do realize Eric ran that script many hours ago, right? I'll give you another 2.5 hours though.

The script is probably still running, and will keep running until Eric gets up and takes a look at the progress. It is going to take some time to remove the 12 million tasks in the bloat.

TBar Joined: 22 May 99 Posts: 5204 Credit: 840,779,836 RAC: 2,768

Ah, many are now gone, leaving two that are listed as Validated with a "minimum quorum 1". I wonder how many of those are still lurking around? Is it Validated or what?

https://setiathome.berkeley.edu/workunit.php?wuid=3861283408

granted credit: 104.20; minimum quorum: 1; initial replication: 2

| Task | Computer | Sent | Time reported | Status | Runtime | CPU time | Credit | Application |
|---|---|---|---|---|---|---|---|---|
| 8493614556 | 8097309 | 30 Jan 2020, 17:37:30 UTC | 31 Jan 2020, 10:07:46 UTC | Completed and validated | 259.93 | 244.61 | 104.20 | SETI@home v8 v8.11 (cuda42_mac) x86_64-apple-darwin |
| 8493614557 | 8743335 | 30 Jan 2020, 17:37:22 UTC | 23 Mar 2020, 9:03:18 UTC | In progress | --- | --- | --- | SETI@home v8 v8.24 (opencl_ati5_SoG_nocal) windows_intelx86 |

Millions?

W-K 666 Joined: 18 May 99 Posts: 19118 Credit: 40,757,560 RAC: 67

An unknown variable. If you only have two, with an RAC over 1.6 million, but I have ten, with an RAC of 26,400, then it's probably too difficult to make an accurate guess. But I doubt it is anywhere near a million.

[edit] Not all from the same tape, just all split on the 30th Jan.

Grant (SSSF) Joined: 19 Aug 99 Posts: 13755 Credit: 208,696,464 RAC: 304

Forums have almost ground to a halt. So the Scheduler should go MIA again any minute now...

Edit: yep, fail, fail, fail. And even the web site is barely responding.

7/03/2020 15:35:06 | SETI@home | Scheduler request failed: Couldn't connect to server
7/03/2020 15:36:58 | SETI@home | Scheduler request failed: Couldn't connect to server
7/03/2020 15:40:28 | SETI@home | Scheduler request failed: Couldn't connect to server

Grant
Darwin NT

TBar Joined: 22 May 99 Posts: 5204 Credit: 840,779,836 RAC: 2,768

From what I remember, the people with the AMD 5700s were cranking one out every 20 seconds or so. Those machines would be the ones to investigate. It was really quite alarming to see so many clearly False Valids being generated.

W-K 666 Joined: 18 May 99 Posts: 19118 Credit: 40,757,560 RAC: 67

> From what I remember, the people with the AMD 5700s were cranking one out every 20 seconds or so. Those machines would be the ones to investigate. It was really quite alarming to see so many clearly False Valids being generated.

I had a look to see whether they were from any of the known problems or 'noise bombs', and that doesn't seem to be the case. Some of mine are blc tasks and the others are from Arecibo, and all look like they ran the full distance.

Grant (SSSF) Joined: 19 Aug 99 Posts: 13755 Credit: 208,696,464 RAC: 304

> The script is probably still running and will until Eric gets up and takes a look at the progress.

It's a script that is run manually by the staff; it's not a cron job that is set to run over and over again. It's run, does its thing, and ends. If you like, you can look at the graphs and see the effect it had: a few hundred thousand knocked off the almost 16 million total, between 22:00 and 23:00 graph time. It ran, it finished, the effect was minimal.

> It is going to take some time to remove the 12 million tasks in the bloat

Yep, months at the present rate, due to the deadlines and more new work being added to the database.

Grant
Darwin NT
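Grant's "months at the present rate" is a net-rate argument: a one-off script lowers the backlog once, but the time to clear it depends on how much faster rows are removed than new ones are added. A minimal sketch assuming constant daily rates; the function name and the per-day rates in the example are hypothetical placeholders, since the thread gives only the totals (almost 16 million, of which about 12 million is bloat):

```python
import math


def days_to_drain(backlog: float, removed_per_day: float, added_per_day: float) -> float:
    """Days for a backlog to reach zero, assuming constant daily rates.

    Returns math.inf if new work arrives as fast as (or faster than) it is removed.
    """
    net_per_day = removed_per_day - added_per_day
    if net_per_day <= 0:
        return math.inf
    return backlog / net_per_day


# Hypothetical rates, for illustration only.
print(days_to_drain(12_000_000, 500_000, 400_000))  # 120.0
print(days_to_drain(12_000_000, 400_000, 400_000))  # inf
```

A one-off removal of a few hundred thousand rows only reduces `backlog`; it leaves `net_per_day` unchanged, which is why its effect on the overall timescale is minimal.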

W-K 666 Joined: 18 May 99 Posts: 19118 Credit: 40,757,560 RAC: 67

What's the graph time re UTC?

Kiska Joined: 31 Mar 12 Posts: 302 Credit: 3,067,762 RAC: 0

> What's the graph time re UTC?

Time is shown in whatever your timezone is; in this case, for me it's 2020-03-06T07:12:18+1100 to 2020-03-07T13:12:18+1100. You can view it here: https://munin.kiska.pw/munin/static/dynazoom.html?plugin_name=Munin-Node%2FMunin-Node%2Fresults_setiathomev8_in_progress_validation&start_iso8601=2020-03-06T11%3A57%3A46%2B1100&stop_iso8601=2020-03-07T17%3A57%3A46%2B1100&start_epoch=1583439138&stop_epoch=1583547138&lower_limit=&upper_limit=&size_x=800&size_y=400&cgiurl_graph=%2Fmunin-cgi%2Fmunin-cgi-graph
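To answer the UTC question for Kiska's example, the +1100 timestamps can be converted with Python's standard library. The resulting Unix times match the `start_epoch` and `stop_epoch` parameters in the dynazoom URL above, which is consistent with the graph displaying UTC instants in the viewer's local zone. The helper name is illustrative:

```python
from datetime import datetime, timezone


def graph_time_to_utc(stamp: str) -> datetime:
    """Parse an ISO 8601 timestamp with a +HHMM offset and convert it to UTC."""
    return datetime.strptime(stamp, "%Y-%m-%dT%H:%M:%S%z").astimezone(timezone.utc)


start = graph_time_to_utc("2020-03-06T07:12:18+1100")
stop = graph_time_to_utc("2020-03-07T13:12:18+1100")
print(start.isoformat())       # 2020-03-05T20:12:18+00:00
print(stop.isoformat())        # 2020-03-07T02:12:18+00:00
print(int(start.timestamp()))  # 1583439138
print(int(stop.timestamp()))   # 1583547138
```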

TBar Joined: 22 May 99 Posts: 5204 Credit: 840,779,836 RAC: 2,768

> Well, I think I'm making some progress on this. Here's a table with the v8 SSP values when I started (a couple of hours ago), for reference. And what appear to be the SQL counts that they represent. I had to line them up by eye, but I had nine rows in each block, and this is the only way they fitted.

Is it possible the system doesn't know what to do with those WUs listed as Validated with a minimum quorum of 1 while still having an outstanding Wingman? If that were the case, there wouldn't need to be a very large number of those WUs; the errors generated by them might be enough to slow the assimilation to a crawl.

Ville Saari Joined: 30 Nov 00 Posts: 1158 Credit: 49,177,052 RAC: 82,530

All the tasks in those valid lists that give an error when I click the workunit link also give the error when I try to open the task link. This is a pretty clear indication that we are just observing different points in time: the list and the individual pages come from different databases.

Ville Saari Joined: 30 Nov 00 Posts: 1158 Credit: 49,177,052 RAC: 82,530

Finally I was able to open my own valid tasks list. At the end there are just four tasks that I received earlier than 1 Mar. Of those four, two are artifacts of the replica displaying old data: the result and workunit have been deleted in the primary database. The remaining two are quorum 1 tasks from January that have a wingman still crunching: https://setiathome.berkeley.edu/workunit.php?wuid=3860194203 and https://setiathome.berkeley.edu/workunit.php?wuid=3861282516

Just two out of 5535 on this host doesn't look too bad. And I guess those two tasks have been assimilated; the still-crunching wingman just prevents them from being deleted, so they are counted in 'waiting for db purging' on SSP.
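Ville's reading (validated and assimilated, but not purgeable while a wingman's task is still out) can be expressed as a small state check. This is a simplified, hypothetical model: the class names, status strings and bucket labels are illustrative, it is not the actual BOINC transitioner or db_purge logic, and the 'waiting for db purging' placement follows Ville's guess rather than anything confirmed by the project:

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Task:
    # Simplified states: "valid", "in_progress", "error", "timed_out"
    status: str


@dataclass
class Workunit:
    min_quorum: int
    tasks: list[Task] = field(default_factory=list)
    assimilated: bool = False

    def validated(self) -> bool:
        # Quorum reached once enough tasks are valid.
        return sum(t.status == "valid" for t in self.tasks) >= self.min_quorum

    def all_tasks_over(self) -> bool:
        # No task still out in the field.
        return all(t.status != "in_progress" for t in self.tasks)

    def purgeable(self) -> bool:
        return self.validated() and self.assimilated and self.all_tasks_over()

    def ssp_bucket(self) -> str:
        # Hypothetical mapping onto the SSP queue names discussed in the thread.
        if not self.validated():
            return "waiting for validation"
        if not self.assimilated:
            return "waiting for assimilation"
        return "waiting for db purging"


# Quorum-1 workunit: one valid task, wingman task still outstanding.
wu = Workunit(min_quorum=1, tasks=[Task("valid"), Task("in_progress")], assimilated=True)
print(wu.ssp_bucket(), wu.purgeable())  # waiting for db purging False
wu.tasks[1].status = "timed_out"        # wingman's deadline passes
print(wu.purgeable())                   # True
```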

Richard Haselgrove Joined: 4 Jul 99 Posts: 14655 Credit: 200,643,578 RAC: 874

> https://setiathome.berkeley.edu/workunit.php?wuid=3860194203 and

You say 'still crunching wingmen', but I doubt it. The tasks were both issued on 30 January, and have a deadline of 23 March. And both computers have contacted the servers recently: one on 5 March, and one today. I'm waiting for the task lists on each of their computers to load, but at least one is still actively crunching: the one which contacted today received new work, and has already returned one of the tasks. So, my best guess (prediction) is that these will turn out to be ghost tasks, never received and never to be crunched. They will reach their deadline and time out on 23 March.

What happens then, I'm less certain about. The minimum quorum of one, but initial replication of two, is an unusual combination, and we don't know exactly how the SETI daemons are programmed to cope with it. Ideally, a simple 'finished/purge', but my concern would be that the system, in its current configuration, might create and send out a replacement task.

As an aside, if the deadlines had been shorter back when this workunit was created, it wouldn't still be here now. If the project were continuing, shorter deadlines would certainly reduce the damage from bumps in the road like this.
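The deadline arithmetic behind Richard's aside, using the dates given in the post (issued 30 January, deadline 23 March). The 20-day deadline is a hypothetical comparison value, not a figure proposed in the thread, and the helper name is illustrative:

```python
from datetime import date, timedelta


def timeout_date(sent: date, deadline_days: int) -> date:
    """Date on which an unreturned task times out, given its deadline length."""
    return sent + timedelta(days=deadline_days)


sent = date(2020, 1, 30)
actual_deadline = date(2020, 3, 23)
print((actual_deadline - sent).days)  # 53

# Hypothetical shorter deadline, for comparison only.
print(timeout_date(sent, 20))         # 2020-02-19
```

With the shorter figure, a ghost task issued on 30 January would have timed out well before the March backlog discussed in this thread.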

©2024 University of California

SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.