Can't report or get new tasks


| Author | Message |

|---|---|

Darrell Benvenuto Joined: 14 Oct 00 Posts: 23 Credit: 17,457,177 RAC: 58

|

"People are grabbing and returning work faster than it is being created at the moment. Because of all this activity, it gives the appearance that the servers are unavailable." Do you think this problem will go away once the Green Bank Telescope data starts being distributed for processing? Or do you think it is due to several of the splitters being offline at the moment? -- Darrell

|

Gary Charpentier Joined: 25 Dec 00 Posts: 30698 Credit: 53,134,872 RAC: 32

|

"People are grabbing and returning work faster than it is being created at the moment. Because of all this activity, it gives the appearance that the servers are unavailable." I think the problem is the C10K issue: more crunchers than the project servers can support.

|

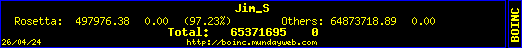

Jim_S Joined: 23 Feb 00 Posts: 4705 Credit: 64,560,357 RAC: 31

|

"People are grabbing and returning work faster than it is being created at the moment. Because of all this activity, it gives the appearance that the servers are unavailable." Then we Need Another Server Drive.  I Desire Peace and Justice, Jim Scott (Mod-Ret.) |

Darrell Benvenuto Joined: 14 Oct 00 Posts: 23 Credit: 17,457,177 RAC: 58

|

"Then we Need Another Server Drive." Having another few servers on hand is pretty much always a good thing. We might be able to approach a major vendor like HP from the "charitable contribution" angle. A rack or two of fully-populated C7000 enclosures might come in pretty handy.  |

betreger Joined: 29 Jun 99 Posts: 11362 Credit: 29,581,041 RAC: 66

|

"Then we Need Another Server Drive." Do not hold your breath; your face will turn purple. That just ain't gonna happen. |

Gary Charpentier Joined: 25 Dec 00 Posts: 30698 Credit: 53,134,872 RAC: 32

|

"People are grabbing and returning work faster than it is being created at the moment. Because of all this activity, it gives the appearance that the servers are unavailable." I think it is a bit more complicated than one box. I suspect that besides new primary and secondary science servers, you will also need to seriously upgrade the speed of a bunch of file servers and disk drives. We might be looking at enterprise-size budgets then. But before we consider that, we need to answer a basic question; are we crunching faster than data is being collected at the telescope? I think the three day outage is a pretty good indication that we are. If we are, then there may be no need for more crunchers, and ergo no need for a faster server. I think that is also a reason the giga link to H/E isn't front burner. Now if you can find a rock solid DB that runs twice as fast as what they are using, and doesn't cost an arm and a leg ... then we might get ntpckr going and get on with candidate selection. And on the thread topic, have no problem uploading and reporting. Downloads failing, stuck in retry and project backoffs.

|

Darrell Benvenuto Joined: 14 Oct 00 Posts: 23 Credit: 17,457,177 RAC: 58

|

"before we consider that, we need to answer a basic question; are we crunching faster than data is being collected at the telescope? I think the three day outage is a pretty good indication that we are." You're probably right, although in the absence of an authoritative statement from Eric or one of the other project members, all we can do is make educated guesses. On the topic of telescope data, I saw it mentioned in several articles that the GBT generates 60 TB of data a day -- or, more data in a single day than Arecibo generated in an entire year. While most likely our data will be a subset of that overall stream (if the amount described in the articles is correct), even if it is only 10x more data than we had before, once that gets underway we should have no shortage whatsoever of work units to process.

|
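For a sense of scale, the 60 TB/day figure quoted from the articles can be turned into a sustained data rate. This is only a back-of-the-envelope sketch: it assumes decimal terabytes and a constant rate over 24 hours, and the function name `sustained_rate_bytes_per_s` is made up for illustration.

```python
# Assumption: 1 TB = 10**12 bytes (the articles may have meant binary TiB).
TB = 10**12
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_rate_bytes_per_s(tb_per_day: float) -> float:
    """Average bytes per second needed to move `tb_per_day` terabytes in one day."""
    return tb_per_day * TB / SECONDS_PER_DAY


rate = sustained_rate_bytes_per_s(60)  # 60 TB/day is the figure quoted in the post
print(f"{rate / 1e6:.0f} MB/s sustained, about {rate * 8 / 1e9:.2f} Gbit/s")
```

Under those assumptions the full stream works out to roughly 694 MB/s, which is why the project would almost certainly only ever see a subset of it.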

Darrell Benvenuto Joined: 14 Oct 00 Posts: 23 Credit: 17,457,177 RAC: 58

|

"And on the thread topic, have no problem uploading and reporting. Downloads failing, stuck in retry and project backoffs." I am not experiencing this issue. I currently have a full set of workunits to process, and downloads of new workunits are coming down the pipe. Slowly -- at speeds ranging from 0.89 to 3.5 KBps despite the 35 Mbps data feed here -- but still arriving in due course. On all of my machines that are crunching, I have always selected the maximum 10-day workunit buffer, which helps them ride out any periodic workunit shortages. If you aren't already using the maximum value for this, you may want to increase the size of your workunit buffer.

|

rob smith Joined: 7 Mar 03 Posts: 22233 Credit: 416,307,556 RAC: 380

|

We are certainly very close to the limit, especially when the "tapes" have a lot of blocks with errors on them, or a lot of RF noise to be filtered out before splitting into WUs. It must be very disheartening for them to load a "tape" and see it report 14 of 14 blocks full of errors, or, after chunking it into blocks, to pass them through the RF filters and see them so heavily polluted with earth-sourced RF interference that there is no use splitting them into WUs for distribution. Bob Smith Member of Seti PIPPS (Pluto is a Planet Protest Society) Somewhere in the (un)known Universe? |

tullio Joined: 9 Apr 04 Posts: 8797 Credit: 2,930,782 RAC: 1

|

Got six downloads, executed and reported 2, crunching other 4. Tullio |

Khangollo Joined: 1 Aug 00 Posts: 245 Credit: 36,410,524 RAC: 0

|

"Then we Need Another Server Drive." I think it is pointless to invest in any kind of new hardware/data pipe without thoroughly overhauling the project software first. The servers are distributing 2-minute workunits for GPUs, which requires clients to constantly download THOUSANDS of WUs just for a few days' cache, contact the server every 5 minutes, etc. Even for modern mid-range CPUs, the workunits are just way too short. And average CPU and especially GPU speed keeps increasing. It's not going to work (at all) for much longer, that's for sure. Now, I don't know much about v7, but if they intend to keep handing out such short workunits, I just don't see any hope for S@H... :(

|
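Khangollo's "THOUSANDS of WUs" point can be checked with simple arithmetic. The sketch below assumes one GPU running one workunit at a time, nonstop, at the 2-minute runtime mentioned in the post; the function name `workunits_for_cache` and the choice of cache sizes are illustrative, not anything from the BOINC client.

```python
import math


def workunits_for_cache(cache_days: float, seconds_per_workunit: float,
                        devices: int = 1) -> int:
    """Workunits needed to keep `devices` busy for `cache_days` (hypothetical helper)."""
    seconds_in_cache = cache_days * 24 * 60 * 60
    return math.ceil(seconds_in_cache / seconds_per_workunit) * devices


# 120 s per workunit is the 2-minute GPU figure from the post;
# 10 days is the maximum buffer mentioned earlier in the thread.
for days in (1, 3, 10):
    print(f"{days:>2}-day cache: {workunits_for_cache(days, 120)} workunits per GPU")
```

With these assumptions a single GPU needs 720 workunits per day of cache, so a 10-day buffer means 7,200 downloads for one device alone.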

Slavac Joined: 27 Apr 11 Posts: 1932 Credit: 17,952,639 RAC: 0

|

"Then we Need Another Server Drive." Total cost?  Executive Director GPU Users Group Inc. - brad@gpuug.org |

Fourmyle of Ceres Joined: 24 Jun 99 Posts: 9 Credit: 1,826,095 RAC: 0

|

I had posted a message in this thread yesterday as an additional data point: one of my 3 hosts stopped being able to communicate on Thurs 25-Jul-2011 while at least one of the others was fine. This resolved itself overnight. WUs downloaded at approx 22:00 EDT Monday and began crunching again. All working fine now, after most of a week with WUs in the "Downloading" state. Lesson learned (again): stuff happens. Be patient. |

Darrell Benvenuto Joined: 14 Oct 00 Posts: 23 Credit: 17,457,177 RAC: 58

|

"A rack or two of fully-populated C7000 enclosures might come in pretty handy." If they purchased them used, a C7000 enclosure with 16 servers is currently selling on eBay for around $7,000 (see link below). Four of those make a rack: 64 servers. But I still think HP would love to unload some of their older inventory that has little chance of selling and have it qualify as a charitable donation. http://cgi.ebay.com/HP-C7000-BLADE-ENCLOSURE-W-16X-BL460C-BLADE-SERVER-/380355605971

|
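Taking the eBay numbers in the post above at face value, the rack-level totals are easy to work out. This is only a rough sketch: it uses the roughly $7,000 per enclosure, 16 blades per enclosure and four enclosures per rack from the post, and ignores shipping, racks, power, cooling and networking. The helper name `rack_estimate` is made up for illustration.

```python
def rack_estimate(enclosure_price_usd: float, blades_per_enclosure: int,
                  enclosures_per_rack: int) -> tuple[int, float, float]:
    """Return (servers per rack, rack price, price per server) for used enclosures."""
    servers = blades_per_enclosure * enclosures_per_rack
    total = enclosure_price_usd * enclosures_per_rack
    return servers, total, total / servers


# Figures from the post: ~$7,000 per enclosure, 16 blades each, 4 enclosures per rack.
servers, total, per_server = rack_estimate(7_000, 16, 4)
print(f"{servers} servers, ${total:,.0f} per rack, ${per_server:,.2f} per server")
```

On those figures a full used rack comes to about $28,000 for 64 servers, before any of the supporting infrastructure.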

Darrell Benvenuto Joined: 14 Oct 00 Posts: 23 Credit: 17,457,177 RAC: 58

|

For the curious, a full rack of 64 servers in four C7000 enclosures looks like this: |

jason_gee Joined: 24 Nov 06 Posts: 7489 Credit: 91,093,184 RAC: 0

|

Dumb question: What's the purpose of the glass door ? "Living by the wisdom of computer science doesn't sound so bad after all. And unlike most advice, it's backed up by proofs." -- Algorithms to live by: The computer science of human decisions. |

HAL9000 Joined: 11 Sep 99 Posts: 6534 Credit: 196,805,888 RAC: 57

|

"Dumb question: What's the purpose of the glass door ?" Normally so you can see the blinky lights & keep prying hands from mucking about. SETI@home classic workunits: 93,865 CPU time: 863,447 hours  Join the BP6/VP6 User Group: http://tinyurl.com/8y46zvu

|

jason_gee Joined: 24 Nov 06 Posts: 7489 Credit: 91,093,184 RAC: 0

|

"Dumb question: What's the purpose of the glass door ?" Ahhh, I see, *slaps head*. That's why people keep pushing my buttons ::/ "Living by the wisdom of computer science doesn't sound so bad after all. And unlike most advice, it's backed up by proofs." -- Algorithms to live by: The computer science of human decisions. |

Wiggo Joined: 24 Jan 00 Posts: 34971 Credit: 261,360,520 RAC: 489

|

"Dumb question: What's the purpose of the glass door ?" And just think about the expanded steel mesh that's usually fitted these days behind that glass (possibly to prevent those head slaps, or worse maybe). :D Cheers. |

rob smith Joined: 7 Mar 03 Posts: 22233 Credit: 416,307,556 RAC: 380

|

It's a fine balance: split and crunch too fast and they will run out of "tapes" to feed into the system. Since the data is piggybacked off other projects using the Arecibo ALFA receiver, S@H is at their mercy. If the other projects are not using the ALFA then no data is collected; if it is scanning the sky rapidly we get one sort of data; if it is staring at a single spot we get something else. There have been times when there have been precious few "tapes" left in the cupboard to process, for various reasons. Just now it looks as if the balance between data coming in to be processed and data being processed is about right: there isn't a massive backlog of tapes, nor is the pile growing too quickly. All that a faster "splitting service" would do is empty the cache and stop production for everyone... Like others, I look forward to the day when the data obtained earlier this year from the GBT is available. Apparently the few hours that were collected are the equivalent data volume to months of data from the ALFA receiver... So that should keep us crunching for some time. Bob Smith Member of Seti PIPPS (Pluto is a Planet Protest Society) Somewhere in the (un)known Universe? |

©2024 University of California

SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.