Need OpenCL cmd txt for new GTX 1070


| Author | Message |

|---|---|

Cliff Harding Joined: 18 Aug 99 Posts: 1432 Credit: 110,967,840 RAC: 67

|

I'm thinking of replacing my 2 x EVGA GTX 750Tis with an Nvidia GTX 1070 https://www.bestbuy.com/site/nvidia-geforce-gtx-1070-founders-edition-8gb-gddr5-pci-express-3-0-graphics-card-black/5330700.p?skuId=5330700. All suggestions for the cmd line will be greatly appreciated. Right now I'm running 2 tasks per card and would like to increase that to either 3 or 4. In the beginning it will be installed as is, but as my nickels & dimes are saved I'll be adding it to my EK cooling loop, and if everything works out I'll add a 2nd one.   I don't buy computers, I build them!! |

Zalster Joined: 27 May 99 Posts: 5517 Credit: 528,817,460 RAC: 242

|

I’m assuming SoG? Remember that the GPU RAM available to you is limited to 26% of what is installed on the GPU. So you probably should stay at 2 per card.

|

Tom M Joined: 28 Nov 02 Posts: 5124 Credit: 276,046,078 RAC: 462 |

Are you looking for a command line for the MB*SOG.txt? It is entirely possible that your current MB*SOG.txt file is already as good as it can get. It is also possible that you need to test your GPUs with single tasks, create a solid multi-week baseline, and then see if you can raise the total production in a day with 2 tasks. I haven't had any luck at all getting more total production out of GTX 750 Tis or a GTX 1060 3GB by running two tasks. So far, every test seems to show the 2 tasks taking more than twice as long as one task, so total production goes down :( My impression is that only the GTX 1080 and higher seem to get more total production. I might be wrong. Test results on a specific computer/GPU combination always override guesses. Tom A proud member of the OFA (Old Farts Association). |
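The comparison Tom describes comes down to simple arithmetic: running N tasks side by side only pays off if each one takes less than N times as long as a single task. Here is a minimal sketch of that check. The function names and the timings (600, 1300 and 1100 seconds) are made up for illustration and are not measurements from any card in this thread.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def tasks_per_day(concurrent_tasks: int, seconds_per_task: float) -> float:
    """Tasks finished per day when `concurrent_tasks` run side by side,
    each taking `seconds_per_task` of wall-clock time."""
    if concurrent_tasks < 1 or seconds_per_task <= 0:
        raise ValueError("need at least one task and a positive run time")
    return concurrent_tasks * SECONDS_PER_DAY / seconds_per_task


def worth_running_concurrently(single_time: float,
                               concurrent_tasks: int,
                               concurrent_time: float) -> bool:
    """True if the concurrent setup finishes more tasks per day than one at a time."""
    return (tasks_per_day(concurrent_tasks, concurrent_time)
            > tasks_per_day(1, single_time))


# Hypothetical timings, for illustration only.
print(tasks_per_day(1, 600.0))                         # one task at a time
print(tasks_per_day(2, 1300.0))                        # two tasks, each more than twice as slow
print(worth_running_concurrently(600.0, 2, 1300.0))    # production goes down
print(worth_running_concurrently(600.0, 2, 1100.0))    # production goes up
```

Plug in averages from your own baseline runs; a handful of tasks is not enough, since run times vary a lot by work unit type.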

Cliff Harding Joined: 18 Aug 99 Posts: 1432 Credit: 110,967,840 RAC: 67

|

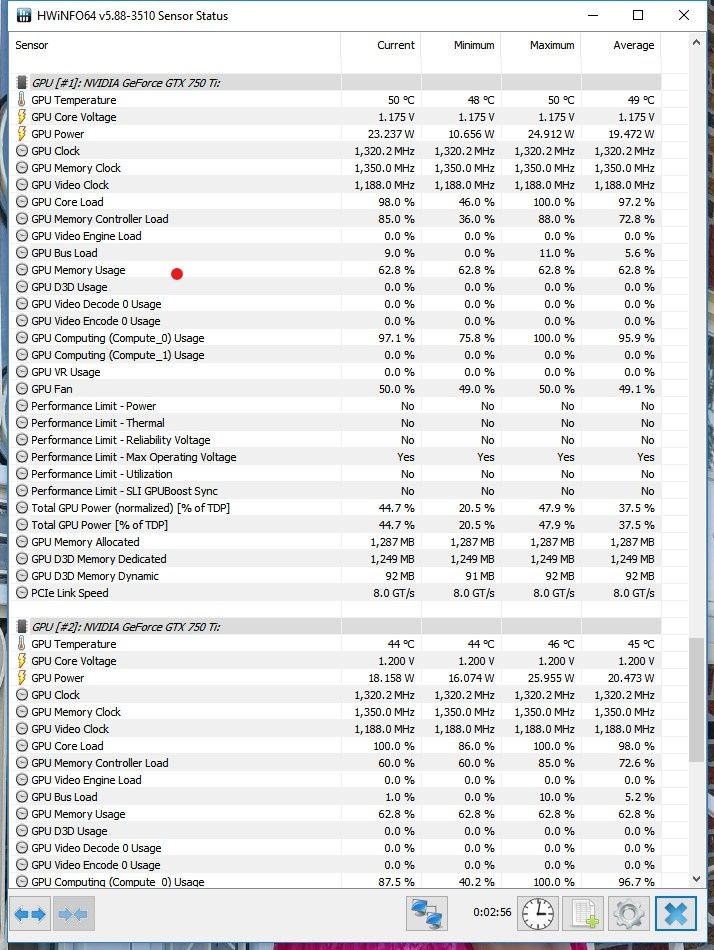

> I’m assuming SoG? Remember that the GPU RAM available to you is limited to 26% of what is installed on the GPU. So you probably should stay at 2 per card.

My fault, I should have made myself clear. Lunatics -beta 6, so I'm running both AP & MB, and both use .5 CPU & .5 GPU.

The current AP line is:

-use_sleep -sbs 256 -spike_fft_thresh 2048 -tune 1 64 1 4 -oclfft_tune_gr 256 -oclfft_tune_lr 16 -oclfft_tune_wg 256 -oclfft_tune_ls 512 -oclfft_tune_bn 32 -oclfft_tune_cw 32 -hp

The current MB line is:

-use_sleep -tt 500 -hp -period_iterations_num 1 -high_perf -sbs 2048 -spike_fft_thresh 4096 -tune 1 64 1 4 -oclfft_tune_gr 256 -oclfft_tune_lr 16 -oclfft_tune_wg 256 -oclfft_tune_ls 512 -oclfft_tune_bn 64 -oclfft_tune_cw 64

Are you positive about the 26% of available GPU RAM? The GPUs are 2GB each and HWinfo64 is showing    I don't buy computers, I build them!! |

Keith Myers Joined: 29 Apr 01 Posts: 13164 Credit: 1,160,866,277 RAC: 1,873

|

You probably won't have to use -use_sleep with a 1070, nor such a low -tt value, unless you are running a very low-performance CPU. As far as the 26% of available VRAM goes, that is absolutely true. It has to do with the default and inviolate compute limitation in OpenCL 1.2. If you try to use more than 26% of the VRAM, via a too extreme -sbs value for example, you will see in the std_err.txt output that OpenCL overrides your parameters and limits the allocation; on a 1070 card, for example, that limit is just 2000MB of memory. Seti@Home classic workunits:20,676 CPU time:74,226 hours   A proud member of the OFA (Old Farts Association) |
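To put rough numbers on that limit, here is a small sketch that applies the 26% figure quoted in this thread to the two cards under discussion. The 26% value is taken from the posts above rather than from vendor documentation, the function names are made up for illustration, and treating a -sbs value as a plain megabyte count to compare against the limit is an assumption.

```python
# Fraction of installed VRAM usable under OpenCL, as quoted in this thread.
# Assumption: taken from the posts above, not from vendor documentation.
OPENCL_VRAM_FRACTION = 0.26


def usable_vram_mb(installed_mb: float,
                   fraction: float = OPENCL_VRAM_FRACTION) -> float:
    """Approximate VRAM (in MB) available before OpenCL caps the request."""
    return installed_mb * fraction


def sbs_within_limit(sbs_mb: float, installed_mb: float) -> bool:
    """True if a -sbs value (assumed to be in MB) stays under the cap."""
    return sbs_mb <= usable_vram_mb(installed_mb)


for name, installed_mb in (("GTX 750 Ti, 2 GB", 2048), ("GTX 1070, 8 GB", 8192)):
    cap = usable_vram_mb(installed_mb)
    print(f"{name}: about {cap:.0f} MB usable; "
          f"-sbs 2048 within limit: {sbs_within_limit(2048, installed_mb)}")
```

By this estimate an 8 GB card lands a little above the 2000MB figure mentioned above, while a 2 GB card has only a few hundred MB to work with.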

|

Marco Vandebergh ( SETI orphan ) Joined: 27 Aug 10 Posts: 39 Credit: 12,630,994 RAC: 9

|

Maybe you can try my app_config.xml, which I use with my 1080Ti under Windows 10. I run 2 WUs at a time and they finish faster than 1 at a time by approx. 20 to 30 seconds, depending on WU type (more work done per hour). With this config, 3 WUs at a time come out about even with running 1 at a time. For Astropulse, change -unroll 28 to your card's CU count.

<app_config>
  <app_version>
    <app_name>setiathome_v8</app_name>
    <plan_class>opencl_nvidia_SoG</plan_class>
    <avg_ncpus>1</avg_ncpus>
    <ngpus>0.5</ngpus>
    <cmdline>-sbs 1024 -spike_fft_thresh 4096 -tune 1 64 1 4 -oclfft_tune_gr 256 -oclfft_tune_lr 16 -oclfft_tune_wg 512 -oclfft_tune_ls 1024 -oclfft_tune_bn 128 -oclfft_tune_cw 128 -pref_wg_num_per_cu 8 -period_iterations_num 1 -hp -high_prec_timer -high_perf -tt 1500 -cpu_lock -instances_per_device 3</cmdline>
  </app_version>
  <app_version>
    <app_name>astropulse_v7</app_name>
    <avg_ncpus>1</avg_ncpus>
    <ngpus>1</ngpus>
    <plan_class>opencl_nvidia_100</plan_class>
    <cmdline>-sbs 1024 -unroll 28 -oclFFT_plan 256 16 256 -ffa_block 16384 -ffa_block_fetch 8192 -tune 1 64 4 1 -tune 2 64 4 1 -hp -cpu_lock -instances_per_device 3</cmdline>
  </app_version>
  <app_version>
    <app_name>astropulse_v7</app_name>
    <avg_ncpus>1</avg_ncpus>
    <ngpus>1</ngpus>
    <plan_class>cuda_opencl_100</plan_class>
    <cmdline>-sbs 1024 -unroll 28 -oclFFT_plan 256 16 256 -ffa_block 16384 -ffa_block_fetch 8192 -tune 1 64 4 1 -tune 2 64 4 1 -hp -cpu_lock -instances_per_device 3</cmdline>
  </app_version>
  <app_version>
    <app_name>astropulse_v7</app_name>
    <avg_ncpus>1</avg_ncpus>
    <ngpus>1</ngpus>
    <plan_class>opencl_nvidia_cc1</plan_class>
    <cmdline>-sbs 1024 -unroll 28 -oclFFT_plan 256 16 256 -ffa_block 16384 -ffa_block_fetch 8192 -tune 1 64 4 1 -tune 2 64 4 1 -hp -cpu_lock -instances_per_device 3</cmdline>
  </app_version>
  <app_version>
    <app_name>astropulse_v7</app_name>
    <avg_ncpus>1</avg_ncpus>
    <ngpus>1</ngpus>
    <plan_class>cuda_opencl_cc1</plan_class>
    <cmdline>-sbs 1024 -unroll 28 -oclFFT_plan 256 16 256 -ffa_block 16384 -ffa_block_fetch 8192 -tune 1 64 4 1 -tune 2 64 4 1 -hp -cpu_lock -instances_per_device 3</cmdline>
  </app_version>
</app_config> |
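If you want to try different task counts without hand-editing the file each time, a short script can write one `<app_version>` entry for you. This is only a sketch: `build_app_config` is a made-up helper, and it assumes that the number of tasks per GPU is set through `<ngpus>` as 1 divided by the task count (0.5 for 2 tasks, as in the setups described in this thread). The element names are the ones used in the config above, and `ET.indent` needs Python 3.9 or newer.

```python
import xml.etree.ElementTree as ET


def build_app_config(app_name: str, plan_class: str, tasks_per_gpu: int,
                     cmdline: str, avg_ncpus: float = 1.0) -> str:
    """Return app_config.xml text with a single <app_version> entry.

    Assumption: <ngpus> is the fraction of a GPU each task reserves,
    so N tasks per GPU means ngpus = 1 / N.
    """
    if tasks_per_gpu < 1:
        raise ValueError("tasks_per_gpu must be at least 1")
    root = ET.Element("app_config")
    version = ET.SubElement(root, "app_version")
    ET.SubElement(version, "app_name").text = app_name
    ET.SubElement(version, "plan_class").text = plan_class
    ET.SubElement(version, "avg_ncpus").text = f"{avg_ncpus:g}"
    ET.SubElement(version, "ngpus").text = f"{1 / tasks_per_gpu:g}"
    ET.SubElement(version, "cmdline").text = cmdline
    ET.indent(root)  # pretty-print; available from Python 3.9
    return ET.tostring(root, encoding="unicode")


# Example: two MB tasks per GPU with a shortened, illustrative command line.
print(build_app_config("setiathome_v8", "opencl_nvidia_SoG", 2,
                       "-sbs 1024 -period_iterations_num 1 -tt 1500"))
```

Save the output as app_config.xml in the project folder, then have BOINC re-read its config files (or restart it) so the change takes effect.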

Cliff Harding Joined: 18 Aug 99 Posts: 1432 Credit: 110,967,840 RAC: 67

|

Gave myself an early birthday present on Saturday and installed the 1st of 2 GTX 1070s. It's up and running, and I'll check on it after I get back from voting machine inspector class.   I don't buy computers, I build them!! |

©2024 University of California

SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.