Panic Mode On (92) Server Problems?


| Author | Message |

|---|---|

|

Aurora Borealis Joined: 14 Jan 01 Posts: 3075 Credit: 5,631,463 RAC: 0

|

I've been crunching mostly Beta since last week, since it has a 3 to 1 ratio with main. Last night I ran out of Beta work, so BOINC started crunching my reserve cache of 80 WU from main. Now down to 60 WU, including 28 resends (3 AP) I picked up overnight. I figure I've still got another 8 to 10 hrs before my GPU falls back on Milkyway and Einstein. EDIT: Got a full (50 WU) cache from BETA, so I'm good for 36 hrs. Boinc V7.2.42 Win7 i5 3.33G 4GB, GTX470 |

cliff Joined: 16 Dec 07 Posts: 625 Credit: 3,590,440 RAC: 0

|

Well, my luck's in today, just got another 2 MB tasks.. Last lot were resends, but a WU is a WU just the same:-) Regards, Cliff, Been there, Done that, Still no damm T shirt!

|

KWSN Ekky Ekky Ekky Joined: 25 May 99 Posts: 944 Credit: 52,956,491 RAC: 67

|

+1

> Well, my luck's in today, just got another 2 MB tasks.. Last lot were resends, but a WU is a WU just the same:-)

|

Julie Joined: 28 Oct 09 Posts: 34053 Credit: 18,883,157 RAC: 18

|

|

|

Josef W. Segur Joined: 30 Oct 99 Posts: 4504 Credit: 1,414,761 RAC: 0

|

> Update on my Beta crunching: my i7 crunched through all the cuda32s and cuda42s (and a few cuda50s) it was sent, and got sent more 32s. Looking at the processing times, though, it seems to my amateur eye like the 42s were more efficient. Does the scheduler think differently than I do? (Probably...)

The Average processing rate (APR) shown on the Application details for host 66539 is 72.81 GFLOPS for CUDA32 and 64.93 GFLOPS for CUDA42. That's the basis on which the Scheduler logic considers CUDA32 faster.

However, the Scheduler choice of which to send has a random factor applied. It's based on the normal distribution so is usually small, and it is further scaled down by the number of completed tasks in the host average. The idea is that the host averages will get more reliable as more tasks are averaged in. But the host averages are calculated using exponential smoothing such that about half the average is based on the most recent 69 values, so they can actually vary considerably for GPU processing.

The "GFLOPS" are derived from the estimate of floating point operations the splitters provide. For SaH v7 those estimates are based on angle range (AR), and are a compromise between how AR affects processing on CPU or GPU. That compromise means that very high AR "shorties" provide a lower APR than normal midrange AR tasks when processed on GPU.

It looks like all the CUDA42 tasks you got were shorties but CUDA32 has gotten a mix. Perhaps the next time the random factor makes the Scheduler send CUDA42 you'll get a batch of midrange AR tasks which will increase the APR for those.

Joe |
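The "most recent 69 values" figure above can be checked with a few lines of arithmetic. This is a minimal sketch, not the scheduler's code: it assumes a plain exponential smoothing step with a weight of 0.01 per new sample, a value the post does not state but which reproduces the 69-sample figure. The function names and the 50 GFLOPS sample are hypothetical and used only for illustration.

```python
import math

# Assumed smoothing weight: the post gives only the "69 values" figure,
# and 0.01 is the value that reproduces it.
ALPHA = 0.01


def update_average(avg, sample, alpha=ALPHA):
    """One exponential-smoothing step: move the average a fraction alpha toward the new sample."""
    return avg + alpha * (sample - avg)


def weight_of_recent(n, alpha=ALPHA):
    """Share of the smoothed average contributed by the n most recent samples."""
    return 1.0 - (1.0 - alpha) ** n


def samples_for_half_weight(alpha=ALPHA):
    """How many of the most recent samples together carry half of the total weight."""
    return math.log(0.5) / math.log(1.0 - alpha)


if __name__ == "__main__":
    print(f"weight of last 69 samples: {weight_of_recent(69):.4f}")
    print(f"samples for half weight:   {samples_for_half_weight():.2f}")

    # Hypothetical run: an average sitting at 72.81 GFLOPS is fed 69 tasks
    # that each report 50 GFLOPS (an invented figure for illustration).
    avg = 72.81
    for _ in range(69):
        avg = update_average(avg, 50.0)
    print(f"average after 69 slower tasks: {avg:.2f}")
```

Under that assumption, a single batch of about 69 shorties is enough to pull the average roughly halfway toward the shorties' rate, which fits the point that GPU averages can swing considerably with the work mix.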

jason_gee Joined: 24 Nov 06 Posts: 7489 Credit: 91,093,184 RAC: 0

|

> Update on my Beta crunching: my i7 crunched through all the cuda32s and cuda42s (and a few cuda50s) it was sent, and got sent more 32s. Looking at the processing times, though, it seems to my amateur eye like the 42s were more efficient. Does the scheduler think differently than I do? (Probably...)

A sidenote perhaps interesting to some: in addition to the averages being volatile with work mix and other aspects (ignoring any hardware or app change not managed), the estimate mechanism already contains several 'noisy' inputs with various offsets/scales and variances in practice. Those sources of noise easily swamp those random factors, inducing chaotic behaviour and making those random offsets more or less redundant.

"Living by the wisdom of computer science doesn't sound so bad after all. And unlike most advice, it's backed up by proofs." -- Algorithms to live by: The computer science of human decisions. |

betreger Joined: 29 Jun 99 Posts: 11361 Credit: 29,581,041 RAC: 66

|

And now we have less than 900K MBs out in the field. |

|

David S Joined: 4 Oct 99 Posts: 18352 Credit: 27,761,924 RAC: 12

|

> Update on my Beta crunching: my i7 crunched through all the cuda32s and cuda42s (and a few cuda50s) it was sent, and got sent more 32s. Looking at the processing times, though, it seems to my amateur eye like the 42s were more efficient. Does the scheduler think differently than I do? (Probably...)

Thanks, gentlemen.

David
Sitting on my butt while others boldly go, Waiting for a message from a small furry creature from Alpha Centauri. |

|

David S Joined: 4 Oct 99 Posts: 18352 Credit: 27,761,924 RAC: 12

|

> I think they may have disabled or changed the built in degradation part of RAC. Normally it should drop at least once a week, but I have machines that have had their RAC flat for ~3 weeks.

My RAC is continuing to fall.

David
Sitting on my butt while others boldly go, Waiting for a message from a small furry creature from Alpha Centauri. |

Wiggo Joined: 24 Jan 00 Posts: 34744 Credit: 261,360,520 RAC: 489

|

I've received 11 resends so far today, including a V7 AP. :-D Cheers. |

cliff Joined: 16 Dec 07 Posts: 625 Credit: 3,590,440 RAC: 0

|

Hi Wiggo, Yeah, I got a whole 1 AP resend:-) However, APs don't seem to be getting validated, since the AP validator is seemingly offline.. Guess that's no surprise considering the AP database problems. But I still have a couple of MB tasks waiting in the wings:-) Regards, Cliff, Been there, Done that, Still no damm T shirt!

|

Jeff Buck Joined: 11 Feb 00 Posts: 1441 Credit: 148,764,870 RAC: 0

|

I notice that the SSP seems to cough up this hairball,

Warning: number_format() expects parameter 1 to be double, string given in /disks/carolyn/b/home/boincadm/projects/sah/html/seti_boinc_html/sah_status.php on line 417

any time the "Results received in last hour" for AP drops to zero. Perhaps a "divide by zero" type of error that's now showing up as we start to hit bottom. |
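The warning text itself says the formatter was handed a string where it expected a number. One plausible reading, and it is only an assumption since the status page source is not shown here, is that the count arrives as an empty or non-numeric string when nothing has been received. The sketch below is a Python analogue of a defensive formatter, not the PHP behind sah_status.php; `format_count` and the "n/a" fallback are hypothetical.

```python
def format_count(value):
    """Format a count with thousands separators, tolerating non-numeric input.

    Hypothetical helper: returns "n/a" instead of failing when the value
    cannot be read as a number (for example an empty string or None).
    """
    try:
        number = float(value)
    except (TypeError, ValueError):
        return "n/a"
    return f"{number:,.0f}"


if __name__ == "__main__":
    # A numeric string, a real zero, and an empty string.
    for raw in ("1234", 0, ""):
        print(repr(raw), "->", format_count(raw))
```

Coercing the value before formatting keeps a legitimate zero displayable while still flagging a missing value, which is the distinction the warning seems to hinge on.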

cliff Joined: 16 Dec 07 Posts: 625 Credit: 3,590,440 RAC: 0

|

This lark is getting beyond a joke now: not only is S@H effectively down for work, but a darn backup project has now gone down as well.. Maybe it's server flu or summat.. going the rounds from one project to the next.. Regards, Cliff, Been there, Done that, Still no damm T shirt!

|

Mike Joined: 17 Feb 01 Posts: 34258 Credit: 79,922,639 RAC: 80

|

Enabled work fetch on Einstein. No work for my GPU for hours and my CPU is almost freezing while crunching. Won't happen anymore. With each crime and every kindness we birth our future. |

Richard Haselgrove Joined: 4 Jul 99 Posts: 14650 Credit: 200,643,578 RAC: 874

|

> Enabled work fetch on Einstein.

All of mine are fetching, crunching and reporting as requested.
http://einstein.phys.uwm.edu/hosts_user.php?userid=144054

Check that your preferences are up-to-date for the applications you were expecting to crunch. |

Mike Joined: 17 Feb 01 Posts: 34258 Credit: 79,922,639 RAC: 80

|

> Enabled work fetch on Einstein.

Richard, I'm no newbie.

27.11.2014 23:17:17 Einstein@Home update requested by user
27.11.2014 23:17:21 Einstein@Home [sched_op] Starting scheduler request
27.11.2014 23:17:21 Einstein@Home Sending scheduler request: Requested by user.
27.11.2014 23:17:21 Einstein@Home Requesting new tasks for CPU and ATI GPU
27.11.2014 23:17:21 Einstein@Home [sched_op] CPU work request: 1.00 seconds; 0.00 CPUs
27.11.2014 23:17:21 Einstein@Home [sched_op] ATI GPU work request: 1.00 seconds; 1.00 GPUs
27.11.2014 23:17:23 Einstein@Home Scheduler request completed: got 0 new tasks
27.11.2014 23:17:23 Einstein@Home [sched_op] Server version 611
27.11.2014 23:17:23 Einstein@Home No work sent
27.11.2014 23:17:23 Einstein@Home see scheduler log messages on http://einstein5.aei.uni-hannover.de/EinsteinAtHome/host_sched_logs/3647/3647123
27.11.2014 23:17:23 Einstein@Home Jobs for CPU are available, but your preferences are set to not accept them

With each crime and every kindness we birth our future. |

|

bluestar Joined: 5 Sep 12 Posts: 7031 Credit: 2,084,789 RAC: 3 |

From the Server Status page: http://setiathome.berkeley.edu/sah_status.html Results ready to send: 3 Apparently it became 0 once again. Yes, I know about the current problems. http://setiathome.berkeley.edu/forum_thread.php?id=76174&postid=1605524 Please click on the links there will you? I guess you all missed what he said. |

|

Claggy Joined: 5 Jul 99 Posts: 4654 Credit: 47,537,079 RAC: 4

|

> Richard, I'm no newbie.

You are in this case: you're running a Boinc without OpenCL detection:
http://einstein5.aei.uni-hannover.de/EinsteinAtHome/host_sched_logs/3647/3647123

2014-11-27 22:24:31.7574 [PID=28773] Request: [USER#xxxxx] [HOST#3647123] [IP xxx.xxx.xxx.3] client 6.12.34

Claggy |

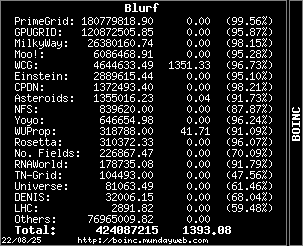

Blurf Joined: 2 Sep 06 Posts: 8962 Credit: 12,678,685 RAC: 0

|

I'm hoping the donations earned from crunching at BU will allow for some new hardware purchases.   |

Jord Joined: 9 Jun 99 Posts: 15184 Credit: 4,362,181 RAC: 3

|

Still got 20 tasks in cache. It helps that I'm being maimed to death by killer eagles in Far Cry 4 most of the day. :) |
