Longer MB tasks are here


| Author | Message |

|---|---|

Steve Dodd Joined: 29 May 99 Posts: 23 Credit: 8,695,373 RAC: 1

|

Word of caution when considering upgrading CUDA drivers. There are problems running GPUGRID work units with the new version NVidia drivers under Windows XP (32-bit). Works fine for 64-bit.

|

popandbob Joined: 19 Mar 05 Posts: 551 Credit: 4,673,015 RAC: 0

|

This may be whistling in the wind, but we have yet to see what effect CUDAv2.3 has on VLAR crunch times... The VLARs are just as slow as (if not slower than) they were before... I did a standalone test and after 20 min the task was only 0.35% done. Bob  Do you Good Search for Seti@Home? http://www.goodsearch.com/?charityid=888957 Or Good Shop? http://www.goodshop.com/?charityid=888957 |

|

Fred W Joined: 13 Jun 99 Posts: 2524 Credit: 11,954,210 RAC: 0

|

This may be whistling in the wind, but we have yet to see what effect CUDAv2.3 has on VLAR crunch times... Thanks, Bob. I'll keep re-branding them to run on the CPU then :) F.

|

Pappa Joined: 9 Jan 00 Posts: 2562 Credit: 12,301,681 RAC: 0

|

Zen, first have your techno person get a copy of XP SP3 (burn a CD). SP3 did correct a couple of file system issues. A quick look shows you have ten machines (including the Vista/Win7 boxes). 6 are at XP SP2. Identifying which one of the AMD machines it is is a bit tough (the 64-3200 falls out). The fact that you are stuck at 6.4.5 or 6.4.7 is another indicator that SP3 might be needed. If you could identify the machine, such as http://setiathome.berkeley.edu/show_host_detail.php?hostid=4841541, it would also help. Regards Please consider a Donation to the Seti Project. |

|

zpm Joined: 25 Apr 08 Posts: 284 Credit: 1,659,024 RAC: 0

|

i can't tell if these are longer as my cuda card is burning through them in 10 minutes... march 10th and sep19 i'll let my gtx 260 run back to gpugrid and let the slower cards take seti work.  I recommend Secunia PSI: http://secunia.com/vulnerability_scanning/personal/ Go Georgia Tech. |

popandbob Joined: 19 Mar 05 Posts: 551 Credit: 4,673,015 RAC: 0

|

zpm, if you're using the 2.3 DLLs you have about a 2x speedup, so you won't see a difference. Bob Edit: for the GTX260  Do you Good Search for Seti@Home? http://www.goodsearch.com/?charityid=888957 Or Good Shop? http://www.goodshop.com/?charityid=888957 |

|

zpm Joined: 25 Apr 08 Posts: 284 Credit: 1,659,024 RAC: 0

|

zpm, if you're using the 2.3 DLLs you have about a 2x speedup, so you won't see a difference. i've got driver 190.38 but haven't messed with any dll files. don't have 2.3 access... they haven't officially released it so i'm not going to go messing with it. |

|

Cosmic_Ocean Joined: 23 Dec 00 Posts: 3027 Credit: 13,516,867 RAC: 13

|

Hello, shipmates, I have always only done SETI, and I've had that message show up before. Somewhere in BOINC client upgrades the 'on_frac' in client_state.xml got messed up and decided "BOINC runs 0.1% of the time, computation enabled 99.7% of that". My solution was to just ignore it. Went away after a few WUs. Linux laptop: record uptime: 1511d 20h 19m (ended due to the power brick giving-up) |

|
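For anyone who wants to check those two numbers rather than wait for them to fix themselves, here is a minimal sketch. It assumes the values sit in a `<time_stats>` element as `<on_frac>` and `<active_frac>`; the sample XML is a made-up excerpt, not a real client_state.xml, and the 5% threshold in `looks_wrong` is an arbitrary illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical excerpt; a real client_state.xml holds far more than this,
# and the <time_stats> layout here is an assumption.
SAMPLE_STATE = """
<client_state>
  <time_stats>
    <on_frac>0.001</on_frac>
    <active_frac>0.997</active_frac>
  </time_stats>
</client_state>
"""

def read_time_stats(xml_text):
    """Return on_frac and active_frac from the XML text as floats."""
    stats = ET.fromstring(xml_text).find("time_stats")
    return {tag: float(stats.findtext(tag)) for tag in ("on_frac", "active_frac")}

def looks_wrong(stats, floor=0.05):
    """Flag an on_frac too small to be plausible for a host that is usually on.

    The 5% floor is an arbitrary illustrative threshold, not a BOINC value.
    """
    return stats["on_frac"] < floor

stats = read_time_stats(SAMPLE_STATE)
print(f"BOINC runs {stats['on_frac']:.1%} of the time, "
      f"computation enabled {stats['active_frac']:.1%} of that")
print("on_frac looks suspicious:", looks_wrong(stats))
```

To try it on a real host, read your own client_state.xml into a string and pass that to `read_time_stats` instead of the sample.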

Filipe Joined: 12 Aug 00 Posts: 218 Credit: 21,281,677 RAC: 20

|

Can't we also improve the science done in the AP tasks, reducing the network problems? |

Richard Haselgrove Joined: 4 Jul 99 Posts: 14650 Credit: 200,643,578 RAC: 874

|

Word of caution when considering upgrading CUDA drivers. There are problems running GPUGRID work units with the new version NVidia drivers under Windows XP (32-bit). Works fine for 64-bit. I have run GPUGrid under 32-bit XP SP3 with the 190.38 drivers: no problems at all. There are problems with Linux drivers, apparently (no personal experience of that) but this 32-bit Windows 'issue' sounds like a myth here. |

|

Miklos M. Joined: 5 May 99 Posts: 955 Credit: 136,115,648 RAC: 73

|

I have also noticed that the most recent downloads are going to take up to 5x as long to complete as most of the previous WUs on my older computers, but sadly, the credit expected in the pending box is about half as much as before. Thus my RAC on this machine may be dropping from 350 to 180. I wonder why the sudden change. I welcome longer units, especially if they solve some of the problems of backlogs, but the lower credit, hmmm. |

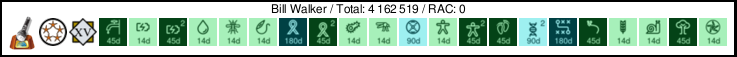

Bill Walker Joined: 4 Sep 99 Posts: 3868 Credit: 2,697,267 RAC: 0

|

You might want to wait until you get the actual credits. My first two long MBs are now on the credit pending list (thanks to Matt for turning it back on), and a rough mental calculation shows about the same credits per hour as the old shorter WUs. Old WUs were 40 to 50 credits each for 1:00 to 1:15 CPU time; new longer ones are about 90 credits pending, for just over 2:00. Also, these new WUs first showed up with a predicted run time of around 2:45, but are actually completing in about 2:10. On a slightly different topic, I see that all my granted credits in the list are now EXACTLY the same as the claimed credit; before, I always expected about 80% to 90% of the claimed value to be granted. Is this a change in how SETI is calculating and assigning credits?

|

|
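The rough mental calculation above can be written out as a small sketch. Pairing 40 credits with 1:00 and 50 credits with 1:15 is an assumption (the post gives only the ranges), and the new-task time uses the "about 2:10" completion figure.

```python
def credits_per_hour(credits, hours, minutes=0):
    """Credits earned per hour of CPU time."""
    return credits / (hours + minutes / 60)

# Old shorter WUs: 40 to 50 credits for 1:00 to 1:15 of CPU time.
# Matching the low credit to the short time is an assumption.
old_short = credits_per_hour(40, 1, 0)
old_long = credits_per_hour(50, 1, 15)

# New longer WUs: about 90 credits pending, completing in about 2:10.
new_rate = credits_per_hour(90, 2, 10)

print(f"old WUs: {old_short:.1f} to {old_long:.1f} credits/hour")
print(f"new WUs: {new_rate:.1f} credits/hour")
```

Under those assumptions the old and new rates land within a couple of credits per hour of each other, which matches the "about the same" conclusion.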

Fred W Joined: 13 Jun 99 Posts: 2524 Credit: 11,954,210 RAC: 0

|

Sounds to me like Boinc might think that Climate Prediction is in deadline trouble. Have you tried suspending the CPDN model(s) temporarily to see if that will enable S@H to fetch work? F.

|

Richard Haselgrove Joined: 4 Jul 99 Posts: 14650 Credit: 200,643,578 RAC: 874

|

Sounds to me like Boinc might think that Climate Prediction is in deadline trouble. Have you tried suspending the CPDN model(s) temporarily to see if that will enable S@H to fetch work? Sounds more like the known bug in BOINC versions around v6.6.36 +/-. They're working on a fix - first draft in v6.6.38 - but from reports it doesn't sound as if they've got it right yet (at least, they believe this part of it is working, but the messages concerning other refinements are confusing people). |

|

Aurora Borealis Joined: 14 Jan 01 Posts: 3075 Credit: 5,631,463 RAC: 0

|

Hello, shipmates, Can't check what Boinc version you're using since your computer information is hidden... My guess is that you are using V6.6.36 or .37 and you are attached to several projects. These versions have a flawed work fetch scheduler. The last version that I know for sure doesn't have this problem is V6.6.31. Version 6.6.38 seems to have a fix also, but I found it had a worse problem with the change in the upload back off code messing up the GUI. |

S@NL - Eesger - www.knoop.nl Joined: 7 Oct 01 Posts: 385 Credit: 50,200,038 RAC: 0

|

@Pasi Karonen, To make things (maybe) a bit more odd: It is enough to suspend just one task. If you have more than one, the other one(s) will nicely run according to your settings. Also, I have the distinct impression that if one task is completed and reported you can 'reactivate' that one suspended task.. At least this seemed to be the case at my end. Back on topic, since the 'pending page' is up again, I have discovered that I have processed (at least) three of them requesting more than 100 credits: one (with an error of my first wingman) two three Also found one completed (there are most likely more, but I haven't searched any further) All are done with the AK optimized client, so all seems quite ok :D [edit] hmm found something strange, CUDA seems to claim quite a bit more credit than my CPU-AK-config: one two [/edit] The SETI@Home Gauntlet 2012 april 16 - 30| info / chat | STATS |

|

Josef W. Segur Joined: 30 Oct 99 Posts: 4504 Credit: 1,414,761 RAC: 0

|

The transition wasn't perfect, another confirmation of Murphy's Law. There were two parts to the change: the new value for chirp_resolution in the analysis_config.xml template, and a change in the splitter code to adjust estimates and deadlines based on the ratio of the old standard 0.333 resolution to actual chirp_resolution. Had the revised splitter code been activated before or simultaneously with the template change, all would have been as intended. But at least some work was produced with the older splitter code and the new template. I got 7 such tasks around 14:00 UTC July 22 on my host 1418195. They were meant to be around 30 hours of work, but of course when the first one took twice as long as had been estimated, DCF (Duration Correction Factor) jumped up and the others were re-estimated to about the right length. The next work request last night got tasks from an adjusted splitter, but of course the high DCF meant it showed estimated crunch time about twice reality. None of this affects science; the revised chirp_resolution is handled by the application as intended. Nor does it have any significant effect on credits; the application flopcount is about doubled since there are about twice as many analyses. It may put a slight kink in the server-side credit multiplier sequence since that involves crunch time vs. estimates, but the 30 day averaging should keep the effect quite small. Hosts which have a short cache have probably all gone through the sequence, and for the faster ones the DCF has probably worked back down to its normal range. Hosts with a moderately long cache may not have seen any of the effects yet. Hosts with a really long cache which makes VHAR 'shorties' run in Earliest Deadline First order may get one jump in DCF from the VHAR work and another a few days later when midrange or VLARs are crunched. Some few of those misestimated WUs will eventually be reissued, they have the old 7 to 31 day deadlines... Joe |
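The sequence described above can be followed with a small sketch. Several things here are assumptions, not taken from the post or from BOINC source: the new chirp_resolution of 0.1665 (chosen only because the post says the work roughly doubled), the 2 hour base estimate, and the DCF rule itself, which is a simplified model that jumps up at once and drifts down by 10% of the gap per completed task.

```python
OLD_CHIRP_RESOLUTION = 0.333  # the old standard value named in the post

def scaled_estimate(base_hours, chirp_resolution):
    """Scale a runtime estimate by the ratio of old to actual resolution."""
    return base_hours * (OLD_CHIRP_RESOLUTION / chirp_resolution)

def update_dcf(dcf, raw_estimate_hours, actual_hours):
    """Simplified DCF model (an assumption): jump up at once, drift down slowly."""
    ratio = actual_hours / raw_estimate_hours
    if ratio > dcf:
        return ratio
    return dcf + 0.1 * (ratio - dcf)

NEW_CHIRP_RESOLUTION = 0.1665  # hypothetical value, not given in the post
BASE_HOURS = 2.0               # hypothetical estimate under the old settings

actual = scaled_estimate(BASE_HOURS, NEW_CHIRP_RESOLUTION)  # real crunch time

# Old splitter with new template: raw estimate unscaled, task runs twice as long.
dcf = update_dcf(1.0, BASE_HOURS, actual)
print(f"DCF after first mis-estimated task: {dcf:.2f}")
print(f"remaining old-splitter tasks now shown as {BASE_HOURS * dcf:.1f} h")

# Adjusted splitter: raw estimate is right, but the high DCF doubles what is shown.
print(f"new-splitter task shown as {actual * dcf:.1f} h, really {actual:.1f} h")

# Each correctly estimated task that completes pulls DCF back toward 1.
dcf_after = update_dcf(dcf, actual, actual)
print(f"DCF after one correctly estimated task: {dcf_after:.2f}")
```

The point of the sketch is only the order of events: one jump in DCF from the mis-estimated work, an over-long displayed estimate on correctly estimated work, then a slow return toward the normal range.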

Richard Haselgrove Joined: 4 Jul 99 Posts: 14650 Credit: 200,643,578 RAC: 874

|

Thanks Joe, I thought I'd been seeing something a bit odd but hadn't had the perseverance to get to the bottom of it. Just for a moment I thought my Q9300 had dropped to half speed just as I installed the new nVidia drivers, but no - double time got double credit and still validated, so I guessed it was new chirps with old deadlines. Edit - a lot of the ones I did check were like task 1313459325, with an AR in the 0.2 band - unusual enough that I don't have a mental note of how long they should take. @ MarkJ, This may explain why I thought we would need to change the CUDA flops estimates with the 2.3 DLLs, but you didn't see any need. Let's re-check next week, when the anomalous ones have worked through. |

Lint trap Joined: 30 May 03 Posts: 871 Credit: 28,092,319 RAC: 0

|

Now that the community in general is pretty much into the longer MB WUs and crunching them, I don't suppose there are any thoughts about converging AP code and MB code into a single workunit? I think one "super-wu" should use all available client processors exclusively. So, a quad-core machine with one CUDA GPU would have a "super-wu" crunching on 5 processors simultaneously. The project apps would have to divide the wu accordingly. Using all processors simultaneously would be most efficient, as long as there is code that can't run in both CPU and GPU (AP). Perhaps super-crunching machines, with double-digit cores and multiple CUDA processors, could work two super-wu's simultaneously. I don't really care how long a workunit takes to crunch on my machine, it's not worth worrying about. However, as crunching times get longer, a credit estimate figure under the Tasks tab in BOINC, based on the # of flops crunched so far, updated every 1,000 or 10,000 or something flops, might be helpful. I suppose AP specific code would have to be restricted to CPU execution until it has been reworked to run in a GPU environment, but that should be doable. The advantages would be that all the science work gets done at once for each sky point, less d/b loading and less d/b maintenance for separate AP/MB queues/tables/etc., and less TCP loading (again fewer uploads/downloads). I know I started to ramble on there...but hey, it's me :-) ??? Martin |

James Sotherden Joined: 16 May 99 Posts: 10436 Credit: 110,373,059 RAC: 54

|

Well, I've crunched a few of the longer WUs on my P4 running op apps, and I must say they pay pretty well. Depending on how long they took, the credit is 78 up to 113. Some work said 7.5 hours, some 8, and I'm getting some 10 and 11 hour to completion work now. Would that be the time completion factor resetting itself? I have some on the Mac that say 4.5 hours to crunch, but I'm still doing Aug dates yet. Whatever Eric did sure worked, no problems getting or sending work this weekend at all. Well done Eric and crew.  Old James |


SETI@home and Astropulse are funded by grants from the National Science Foundation, NASA, and donations from SETI@home volunteers. AstroPulse is funded in part by the NSF through grant AST-0307956.